Have you ever checked your Search Console and realized a chunk of your “perfect” pages aren’t even indexed? It’s incredibly frustrating.

Right now, with AI-powered search engines like Google’s SGE processing billions of crawls every single day, having a messy site structure is a digital death sentence.

Honestly, inefficient crawling can bury even the most brilliant content, wasting as much as 60% of your crawl budget by some estimates.

Think about that for a second. More than half of Google’s effort on your site could be going down the drain because the bot is getting lost.

But what are we actually talking about when we say crawl efficiency? It sounds like heavy technical jargon, but it’s just the art of making sure search engines like Googlebot can discover, crawl, and index your pages without wasting precious resources on junk.

At Zumeirah, we view it as building a clean, high-speed highway so the bots can get to your most important content immediately.

Why does this matter so much in 2026? Because the SEO landscape has shifted beneath our feet. We aren’t just fighting for a blue link anymore; we’re fighting to be the primary source for AI overviews.

With mobile-first indexing being the absolute standard and Core Web Vitals raising the bar for performance, your technical foundation has to be rock solid.

Then there’s Semantic SEO, which is really just a fancy way of saying we need to make sure the “internet’s brain” actually understands the context of your brand, not just the keywords you’re chasing.

In Dubai, where the market moves at 5G speeds, we know that a fast site is only half the battle. If a bot gets stuck in a redirect loop or a bloated sitemap, your 100/100 PageSpeed score won’t save you. You need a strategy that guides the bot’s attention to the pages that actually make you money.

This guide covers proven strategies to master crawl efficiency, boost indexation, and achieve high-performance SEO.

You know what? We’re going to look at exactly how to clean up your digital “waste,” fix your sitemaps, and stop those bots from wandering into dead ends. Stick around, because your crawl efficiency and your indexation rate are about to get a serious upgrade.

What is Crawl Efficiency and Why It Matters in 2026

If you’ve ever wondered why your beautifully designed pages aren’t showing up in search results, you’re likely facing a “discovery” problem.

In the UAE’s competitive market, being fast is only half the battle; being found is the other half.

Understanding Crawl Budget

Think of the “Crawl Budget” as a bank account for your website. Search engines don’t have infinite time to spend on one site. Googlebot has a limited number of pages it’s willing to crawl on your site every single day.

This budget depends on a few heavy hitters like your site’s size, your server health, and how often you’re actually adding value. If your server is lagging or your site is a maze of broken links, the bot just leaves before it finds the good stuff.

By 2026, the game has gotten even more specific. We aren’t just dealing with basic crawlers anymore. AI bots now prioritize “entity-rich” content that actually matches what a user is trying to find.

If your pages feel hollow or repetitive, these AI agents will spend their “budget” elsewhere. At Zumeirah, we focus on making sure every byte the bot spends on your site counts.

The Role of Crawl Efficiency in SEO

If Google doesn’t crawl it, Google doesn’t index it. And if you aren’t in the index, you effectively don’t exist for your customers. The impact on your traffic is massive.

Poor crawl efficiency often leads to 30% to 50% of a site’s pages staying completely unindexed. That’s nearly half of your business’s digital presence hidden in the dark.

Why is 2026 the year this becomes non-negotiable? It’s the rise of AEO (Answer Engine Optimization). Search is moving toward direct, immediate answers.

For your site to be the source of those answers, the bots need to crawl your dynamic content at lightning speed.

We’ve seen that sites with a clean “machine-readable bridge” get picked up by AI overviews significantly faster than bloated, messy competitors.

Common Myths Debunked

You know what? I hear this all the time: “My site is small, so I have an unlimited crawl budget.” Honestly, that’s just not true. Even the big names like Moz and SEMrush spend a huge amount of effort making sure their crawl paths are lean. No one has an unlimited pass from Google.

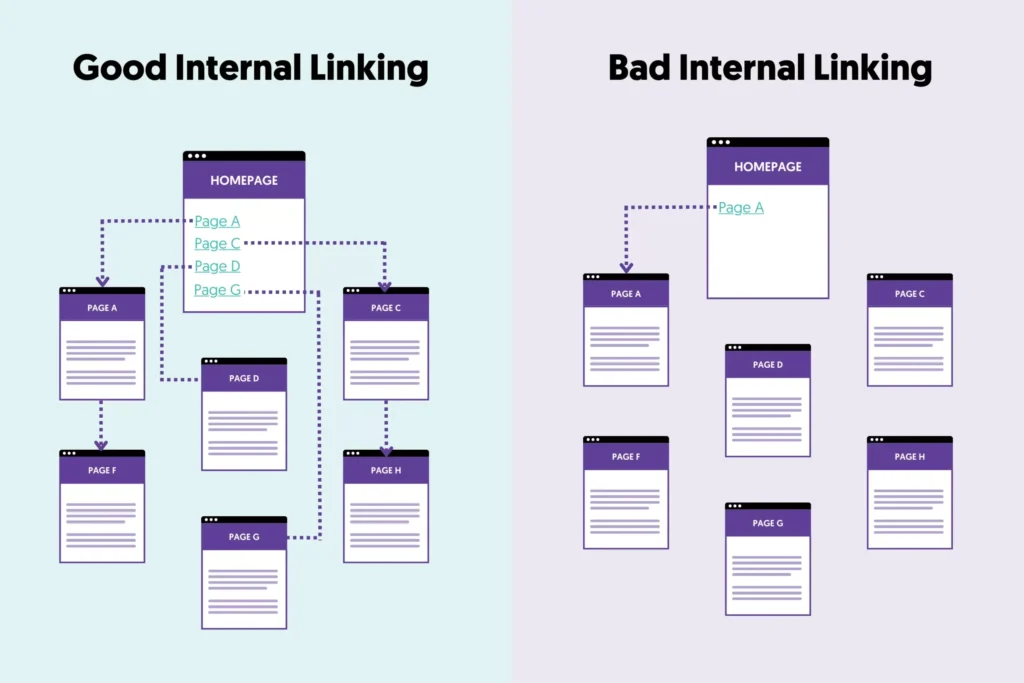

Another big myth is that a sitemap plugin solves everything.

Let me explain: a sitemap is just a map, not a guarantee. If your internal linking is a disaster or you have thousands of “junk” pages like empty categories or tag archives, a sitemap won’t save you.

Even a high-authority site can suffer from “crawl bloat” where the bot gets distracted by useless pages and misses your most important new content. It’s about being intentional, not just having a list of links.

Key Factors Affecting Crawl Efficiency

We’ve talked about what crawl efficiency is, but now we need to look at the “engine parts” that actually make it work.

If your site were a car, your site structure would be the chassis, and your server would be the engine. If one is bent or the other is stalling, you aren’t going anywhere fast.

Site Structure and Internal Linking

If a bot has to click five times to find your most important service page, it probably won’t bother. We always aim for a flat hierarchy.

This means your homepage acts as the central hub, and your main services like our Web Design or SEO packages are just one click away.

You know what? I see so many sites in Dubai using complex, deep menus that bury their best work.

Instead, try using a hub-and-spoke model. Link your main service pages to related blog posts and back again.

This creates a “short-cut” for the bot. Oh, and don’t forget breadcrumbs. They aren’t just for users; they provide a clear, secondary path for crawlers to understand exactly where they are in your digital house.
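
To make “clicks from the homepage” measurable, here is a minimal sketch that runs a breadth-first search over an internal-link graph. The `site_links` map and its URLs are hypothetical; in practice you would export the link graph from your crawler of choice.

```python
from collections import deque


def click_depths(links: dict[str, list[str]], home: str = "/") -> dict[str, int]:
    """Return the minimum number of clicks needed to reach each page from home."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:  # first visit in BFS is the shortest path
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


# Hypothetical internal-link graph: page -> pages it links to.
site_links = {
    "/": ["/web-design/", "/seo/", "/blog/"],
    "/blog/": ["/blog/crawl-budget/"],
    "/blog/crawl-budget/": ["/seo/"],
}

depths = click_depths(site_links)
# Pages deeper than three clicks are candidates for better internal links.
deep_pages = [url for url, depth in depths.items() if depth > 3]
```

Pages that never show up in `depths` at all cannot be reached by following links from the homepage, which is worth flagging separately.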

Server Performance and Site Speed

Here is a statistic that usually shocks people: when your server takes 2 seconds to respond instead of 0.2 seconds, crawlers can access 90% fewer pages during their stay. That is a massive loss of opportunity.
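
The 90% figure falls out of simple arithmetic if you assume, as a simplification, that the bot fetches pages one at a time inside a fixed time window. Real crawlers fetch in parallel and adapt their rate, so treat this as a rough sketch; the 60-second window is an arbitrary illustration.

```python
def pages_crawled(time_budget_ms: int, response_ms: int) -> int:
    """Pages fetched sequentially within a fixed time window (simplified model)."""
    return time_budget_ms // response_ms


budget_ms = 60_000  # hypothetical 60-second crawl window

fast = pages_crawled(budget_ms, 200)    # server responds in 0.2 s
slow = pages_crawled(budget_ms, 2_000)  # server responds in 2 s

# Relative drop in pages crawled when the server is ten times slower.
drop_percent = (fast - slow) * 100 // fast
```

Under this model a tenfold increase in response time always means a tenfold drop in pages fetched, whatever the size of the window.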

In 2026, Core Web Vitals are no longer just a “nice-to-have” for rankings; they are the baseline for crawl health. If your Largest Contentful Paint (LCP) is lagging beyond 2.5 seconds, Googlebot starts to think your server is struggling and backs off.

Honestly, using tools like Google PageSpeed Insights or Lighthouse isn’t just about getting a green score; it’s about proving to the bots that your site is reliable enough to handle a heavy crawl.

Technical Issues and Errors

Nothing wastes a crawl budget faster than a 404 error. Research shows that broken links account for roughly 15% of crawl errors. When a bot hits a dead end, it’s like running into a brick wall: it stops, turns around, and leaves.

Then you have the issue of duplicate content.

If you have five different URLs showing the same content (maybe due to URL parameters or tracking codes), you’re forcing the bot to do five times the work for zero extra value. It also helps to know how to write SEO-optimized content for Google ranking.
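
One way to spot parameter-driven duplicates is to normalize every URL and group the ones that collapse to the same address. The sketch below assumes a hypothetical list of tracking parameters (`TRACKING_PARAMS`); adjust it to whatever your own analytics setup appends.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumed tracking parameters that never change the page content.
TRACKING_PARAMS = {"utm_source", "utm_medium", "utm_campaign", "gclid", "fbclid"}


def normalize(url: str) -> str:
    """Drop tracking parameters, sort the rest, and lowercase the host."""
    parts = urlsplit(url)
    kept = sorted(
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key not in TRACKING_PARAMS
    )
    return urlunsplit(
        (parts.scheme, parts.netloc.lower(), parts.path, urlencode(kept), "")
    )


def group_duplicates(urls: list[str]) -> dict[str, list[str]]:
    """Map each normalized URL to its variants, keeping only real duplicates."""
    groups: dict[str, list[str]] = {}
    for url in urls:
        groups.setdefault(normalize(url), []).append(url)
    return {canon: variants for canon, variants in groups.items() if len(variants) > 1}


crawled = [
    "https://example.com/shoes?color=red",
    "https://Example.com/shoes?utm_source=newsletter&color=red",
    "https://example.com/shoes?color=red&gclid=abc123",
    "https://example.com/bags",
]
duplicates = group_duplicates(crawled)
```

Each key in `duplicates` is a reasonable candidate for the canonical URL that the variants should point to.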

And as we move further into 2026, mobile-friendliness is non-negotiable.

If your mobile version is slow or “heavy,” the Smartphone Googlebot will significantly reduce its crawl frequency.

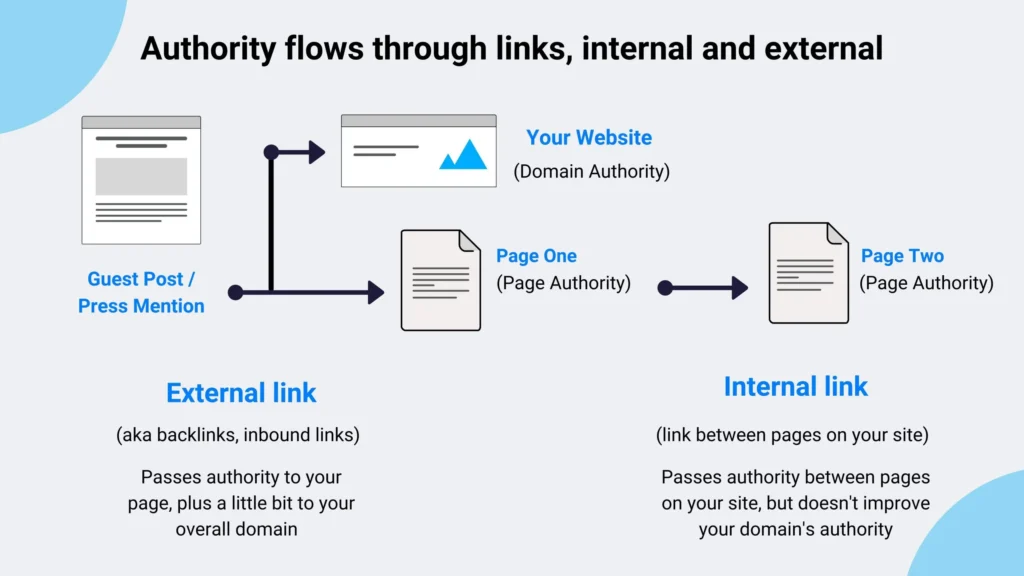

Backlinks and Authority

You might not think of backlinks as a “technical” factor, but they are actually one of the strongest discovery signals. When a high-authority site links to you, it’s like a massive “Enter Here” sign for Googlebot.

A new page with zero backlinks might sit unindexed for weeks. But that same page, with just two or three links from established sites, can be picked up in days.

Why? Because Google assumes that if authoritative sites trust your content, its bots should be spending more time there.

Crawl Efficiency: Factors vs. Impact

| Factor | Impact on Crawl Budget | Top-Tier Optimization Tip |
| --- | --- | --- |
| Flat Site Structure | High | Keep important pages within 3 clicks of the home page. |
| Server Speed (TTFB) | Critical | Use a CDN to serve data from the closest local server in the UAE. |
| 4xx/5xx Errors | High Waste | Regularly audit your site for broken links and fix server timeouts. |
| Duplicate Content | Moderate Waste | Use canonical tags to tell the bot which URL is the “boss”. |
| Backlink Profile | High Priority | Focus on a few quality links rather than dozens of low-value ones. |

How to Optimize Crawl Efficiency: Step-by-Step Guide

Alright, knowing why crawl efficiency matters is one thing, but actually getting under the hood and tuning the engine is where the magic happens.

If you’re ready to move from “unindexed and invisible” to “crawled and dominant,” follow these steps exactly as we do at Zumeirah.

Audit Your Site’s Crawlability

You can’t fix what you haven’t measured. First, head over to your Google Search Console (GSC) and look for the Crawl Stats report.

This is the closest thing you’ll get to a direct conversation with Googlebot. It tells you exactly how many requests are being made and where the bot is hitting a wall.

For a deeper dive, I always recommend running a crawl with Screaming Frog or Sitebulb. These tools mimic a search engine and will flag every redirect chain, broken link, and “orphan page” (pages with no internal links) that are currently draining your budget.

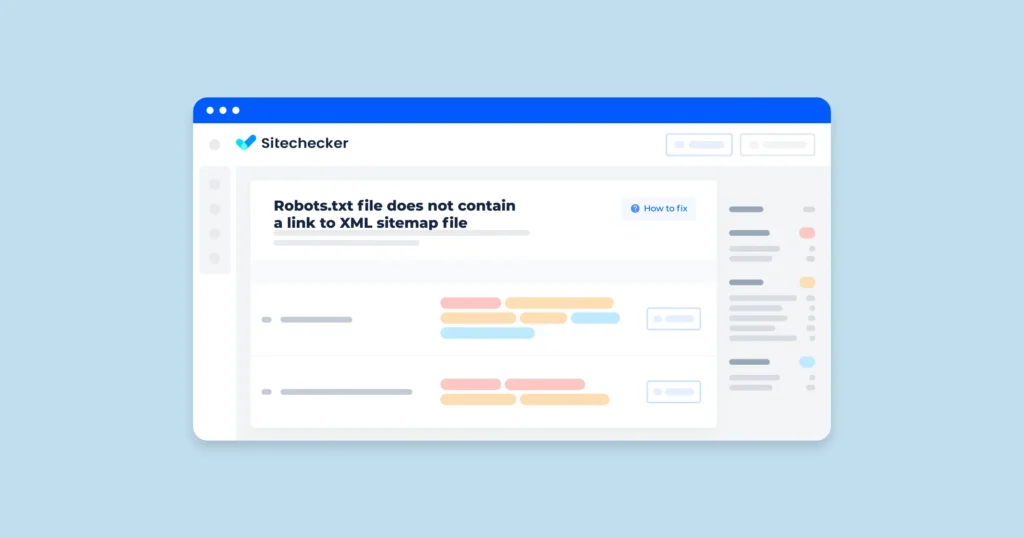

Once you have that list, your first job is validation: make sure your robots.txt isn’t accidentally blocking your most important service pages.

Honestly, you’d be surprised how often a simple “Disallow: /” stays in the file after a site launch.
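
A quick way to catch this is to test your key URLs against the robots.txt rules with Python’s standard-library parser. This is only a sketch: the URLs are placeholders, and Python’s parser applies rules in file order, which can differ from Google’s own matching when Allow and Disallow rules overlap.

```python
from urllib.robotparser import RobotFileParser


def blocked_urls(robots_txt: str, urls: list[str], user_agent: str = "Googlebot") -> list[str]:
    """Return the URLs that the given robots.txt text disallows for user_agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


# A leftover staging rule that blocks the whole site.
leftover_rules = "User-agent: *\nDisallow: /\n"

# Placeholder URLs standing in for your most important pages.
important_pages = [
    "https://example.com/",
    "https://example.com/web-design/",
]

blocked = blocked_urls(leftover_rules, important_pages)
```

If `blocked` is not empty for your money pages, fix the robots.txt before worrying about anything else in the audit.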

Enhance Robots.txt and Sitemaps

Your robots.txt file is your first line of defense. It shouldn’t just be a list of “no-entry” signs; it should be a strategic guide.

You want to disallow “junk” areas like /admin/, /wp-includes/, or any internal search result pages that offer zero value to a searcher.

When it comes to your XML Sitemap, don’t just set it and forget it. You can use <priority> tags to flag which pages are your heavy hitters, but Google has said it ignores that value, so keep each URL’s <lastmod> date accurate as well.

On a Zumeirah site, we prioritize the homepage and main service pages at 1.0, while older blog posts might sit at 0.5.
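
As a rough illustration of that weighting, here is a small sketch that builds a sitemap with the standard library. The URLs and dates are placeholders, and `build_sitemap` is a hypothetical helper, not part of any sitemap plugin.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(entries: list[tuple[str, str, float]]) -> str:
    """Build sitemap XML from (loc, lastmod, priority) tuples."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod, priority in entries:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        ET.SubElement(url, "lastmod").text = lastmod  # W3C date format, YYYY-MM-DD
        ET.SubElement(url, "priority").text = f"{priority:.1f}"
    return ET.tostring(urlset, encoding="unicode")


# Placeholder pages: homepage and a service page at 1.0, an older post at 0.5.
pages = [
    ("https://example.com/", "2026-01-10", 1.0),
    ("https://example.com/web-design/", "2026-01-08", 1.0),
    ("https://example.com/blog/old-post/", "2024-06-01", 0.5),
]

sitemap_xml = build_sitemap(pages)
```

Generating the file from your real publishing data, rather than by hand, is what keeps the dates honest over time.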

Here’s a quick example of a clean, high-performance robots.txt structure:

    User-agent: *
    Disallow: /wp-admin/
    Disallow: /search/
    Allow: /wp-admin/admin-ajax.php
    Sitemap: https://zumeirah.com/sitemap_index.xml

Improve Internal Linking and Site Architecture

If your site structure is a maze, the bot will give up. We focus heavily on fixing orphan pages, those lonely URLs that have no internal links pointing to them. If you don’t link to it, why should Google crawl it?
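
Finding orphans comes down to a set difference: every URL you want indexed, minus every URL that some other page links to. A minimal sketch, assuming you already have a list of sitemap URLs and a hypothetical internal-link map from a crawl:

```python
def find_orphans(all_pages: set[str], links: dict[str, list[str]], home: str = "/") -> list[str]:
    """Return pages that no other page links to (the homepage is exempt)."""
    linked = {target for targets in links.values() for target in targets}
    return sorted(all_pages - linked - {home})


# Hypothetical data: URLs listed in the sitemap, and the links found by a crawl.
sitemap_pages = {"/", "/web-design/", "/seo/", "/blog/launch-offer/"}
internal_links = {
    "/": ["/web-design/", "/seo/"],
    "/web-design/": ["/seo/"],
}

orphans = find_orphans(sitemap_pages, internal_links)
```

Anything in `orphans` either deserves a link from a relevant hub page or does not belong in the sitemap.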

You also need to optimize your anchor text. Instead of using “click here,” use descriptive terms like “custom web design in Dubai”. This helps the bot understand the context of the page it’s about to visit before it even gets there.

Boost Page Speed and Technical SEO

You know what? Speed is the ultimate lubricant for crawl efficiency. If your pages load slowly, the bot spends all its “time budget” waiting for one page to load instead of crawling ten.

- Compress your images: Use WebP formats to keep file sizes tiny without losing quality.

- Minify JS/CSS: Strip out the extra space in your code so the “machine-readable bridge” is as lean as possible.

- Use a CDN: Especially if you’re targeting the UAE market, serving your site from a local server reduces latency and keeps the bots happy.

- Schema Markup: Don’t forget to inject nested JSON-LD. This helps AI search engines recognize your business entities immediately, which is a massive shortcut for their indexers.

Manage Crawl Budget for Large Sites

If you’re running a massive site with thousands of pages, you have to be even more aggressive. You need to prioritize fresh content. Use log file analysis to see exactly which pages the bots are visiting most frequently.

If they are spending too much time on old, irrelevant archives, it’s time to use noindex tags or consolidate those pages.
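
Log file analysis can start very small. The sketch below counts Googlebot requests per path from access-log lines, assuming the common “combined” log format; the sample lines are made up. User-agent strings can be spoofed, so a production check would also verify the requesting IP.

```python
import re
from collections import Counter

# Matches the request, status, and user-agent parts of a combined-format log line.
LOG_LINE = re.compile(
    r'"(?P<method>[A-Z]+) (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def googlebot_hits(lines: list[str]) -> Counter:
    """Count requests per path where the user agent claims to be Googlebot."""
    hits: Counter = Counter()
    for line in lines:
        match = LOG_LINE.search(line)
        if match and "Googlebot" in match.group("agent"):
            hits[match.group("path")] += 1
    return hits


BOT = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
BROWSER = "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"

# Made-up sample lines for illustration.
sample_log = [
    f'66.249.66.1 - - [10/Jan/2026:10:00:00 +0000] "GET /archive/2019/ HTTP/1.1" 200 5120 "-" "{BOT}"',
    f'66.249.66.1 - - [10/Jan/2026:10:00:05 +0000] "GET /archive/2019/ HTTP/1.1" 200 5120 "-" "{BOT}"',
    f'66.249.66.1 - - [10/Jan/2026:10:00:09 +0000] "GET /web-design/ HTTP/1.1" 200 8300 "-" "{BOT}"',
    f'203.0.113.7 - - [10/Jan/2026:10:01:00 +0000] "GET /web-design/ HTTP/1.1" 200 8300 "-" "{BROWSER}"',
]

hits = googlebot_hits(sample_log)
most_crawled = hits.most_common(1)
```

If the top of that list is dominated by archives rather than service pages, that is your cue to noindex or consolidate.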

Remember, crawl efficiency isn’t a one-time setup. It’s a continuous process of trimming the fat and making sure your site remains the fastest, most logical path for the machines that drive our search results.

Advanced Crawl Efficiency Strategies for 2026

If you’ve made it this far, you’ve already outpaced 90% of your competitors who are still stuck in 2023.

But to really dominate the Dubai market in 2026, we need to talk about the “bleeding edge” of crawl efficiency. We’re moving beyond just fixing broken links and into the realm of AI-readiness.

AI and Semantic Optimization

Search is no longer about matching strings of text; it’s about understanding entities. In 2026, your crawl efficiency is directly tied to how quickly an AI agent can map out who you are, what you do, and where you do it.

You know what? I always tell our clients at Zumeirah that basic Schema isn’t enough anymore. You need to use nested JSON-LD to define relationships between people, places, and services. This acts as a “cheat sheet” for Google’s SGE and other AI Overviews.
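
To show what “nested” means in practice, here is a sketch that builds an Organization with a nested address, founder, and service offer, then serializes it for a script tag. The business name, person, and URL are placeholders; the types and properties are standard schema.org vocabulary.

```python
import json

# Placeholder business details; swap in your real entity data.
organization = {
    "@context": "https://schema.org",
    "@type": "Organization",
    "name": "Example Agency",
    "url": "https://example.com/",
    "address": {
        "@type": "PostalAddress",
        "addressLocality": "Dubai",
        "addressCountry": "AE",
    },
    "founder": {"@type": "Person", "name": "Jane Doe"},
    "makesOffer": {
        "@type": "Offer",
        "itemOffered": {"@type": "Service", "name": "Web Design"},
    },
}

# The markup you would place in the page's <head>.
snippet = (
    '<script type="application/ld+json">'
    + json.dumps(organization, indent=2)
    + "</script>"
)
```

The nesting is the point: the place, the person, and the service are declared as related entities rather than as three disconnected blocks.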

By clustering your content around specific user intents rather than just keywords, you build topical authority.

When the bots recognize you as a “subject matter expert” in your niche, they naturally increase your crawl frequency because they trust your data is worth the resources.

Mobile-First and Progressive Enhancement

We live in a mobile-first world, especially here in the UAE where everyone is browsing on the go. But for 2026, we’re looking at Progressive Enhancement.

Integrating a Progressive Web App (PWA) framework isn’t just for a better user experience; it’s a crawl efficiency goldmine.

PWAs allow for aggressive caching and service workers that handle data much more efficiently than a standard mobile site.

This means when a crawler like Smartphone Googlebot hits your site, it can zip through your pages with almost zero server friction.

Honestly, if you aren’t thinking about how your mobile architecture supports faster crawls, you’re essentially leaving money on the table.

Monitoring and Tools

You can’t manage what you don’t monitor. While Google Search Console remains our “North Star,” you should be cross-referencing that data with high-level crawl reports from Ahrefs or SEMrush.

These tools often pick up on nuances like subtle redirect loops that GSC might miss.

Here’s a tip most people overlook: you can actually influence your crawl rate.

Google handles crawl rate automatically, and the old manual crawl-rate setting in Search Console has been retired, but if you’ve recently upgraded your server performance (which you should if you’re with Zumeirah!), consistently fast, error-free responses signal to Google that your site is ready for a more intense “handshake”.

Automation is your friend here: set up alerts so you’re notified the second your crawl error rate spikes.
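
What counts as a “spike” is up to you. One simple rule, sketched below, is to alert when today’s error rate is both above a minimum floor and more than double the baseline. The thresholds and the status counts are assumptions for illustration, not recommended values.

```python
def error_rate(status_counts: dict[int, int]) -> float:
    """Share of crawl requests that returned a 4xx or 5xx status."""
    total = sum(status_counts.values())
    errors = sum(count for status, count in status_counts.items() if status >= 400)
    return errors / total if total else 0.0


def should_alert(
    today: dict[int, int],
    baseline: dict[int, int],
    factor: float = 2.0,   # assumed: alert when the rate more than doubles
    floor: float = 0.05,   # assumed: ignore rates below 5%
) -> bool:
    """Flag a spike when today's error rate clears the floor and outgrows the baseline."""
    rate = error_rate(today)
    return rate >= floor and rate > factor * error_rate(baseline)


# Made-up status counts: HTTP status -> number of bot requests.
baseline_day = {200: 950, 404: 50}
bad_day = {200: 800, 404: 150, 500: 50}

alert = should_alert(bad_day, baseline_day)
```

Wire the result into whatever notification channel your team already watches, so the alert lands where someone will act on it.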

Case Studies

Let’s look at a hypothetical scenario based on real industry data. Imagine a mid-sized e-commerce site in Dubai that was struggling with unindexed products. They had over 10,000 pages, but only 4,000 were showing up in search.

By implementing a flat site hierarchy, fixing their redirect chains, and using canonical tags to eliminate duplicate content waste, they managed to reduce their “crawl waste” by 40%.

Within three months, their indexation rate jumped from 40% to 85%. This didn’t just happen by magic; it happened because they stopped forcing Googlebot to wander through a digital desert and gave it a clear, high-speed road to their best content.

Common Mistakes to Avoid in Crawl Efficiency

Even with the best intentions, it’s remarkably easy to accidentally sabotage your own crawl efficiency.

In our experience at Zumeirah, we’ve seen brilliant designs in Dubai fail to rank simply because of one or two “silent killers” in the backend.

Let’s look at the mistakes you absolutely need to dodge to keep your 2026 SEO strategy on track.

The Robots.txt Trap: When Over-Blocking Backfires

You know what? The most common mistake we see is being too aggressive with robots.txt. It’s tempting to block everything that isn’t a “money page,” but in 2026, that’s a dangerous game.

If you block your CSS or JavaScript files, Googlebot can’t actually “see” your site. It just sees a broken skeleton.

This is especially bad for AI search visibility. If the bots can’t render your page properly because you’ve disallowed the “ingredients” of your design, they won’t cite you in AI Overviews.

Honestly, just one accidental Disallow: /blog/ can wipe out months of content effort in a single afternoon.

The Silent Danger of Ignoring Your Log Files

Google Search Console is great, but it’s not the full story. If you aren’t looking at your server log files, you’re basically flying blind. Logs show you exactly where the bots are actually spending time in real-time.

I’ve seen cases where GSC looks clean, but the log files reveal that Googlebot is wasting 70% of its budget on old, irrelevant parameter URLs from an outdated plugin. Ignoring these logs means you’re missing the “waste” that’s happening right under your nose.

At Zumeirah, we treat log analysis like a digital health check: it’s the only way to catch crawl spikes and bot-traps before they tank your rankings.

Redirect Disasters and Infinite Loops

We all know redirects are necessary, but long chains are crawl budget poison. Every time you redirect Page A to B, and then B to C, you’re forcing the bot to do triple the work. Eventually, Googlebot just gives up and leaves.

And don’t even get me started on redirect loops: that’s where Page A points to Page B, and Page B points back to A.

It’s a digital “dead end” that frustrates bots and users alike. Always use direct 301 redirects whenever possible and clean up those old chains.
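
Chains and loops are easy to detect once your redirects are in a simple source-to-target map. The sketch below follows each hop and labels the result; the map itself is hypothetical, and the `max_hops` cutoff is an arbitrary safety limit.

```python
def trace_redirects(start: str, redirects: dict[str, str], max_hops: int = 10) -> tuple[list[str], str]:
    """Follow redirects from start; label the path 'ok', 'chain', 'loop', or 'too_long'."""
    chain = [start]
    seen = {start}
    current = start
    while current in redirects:
        current = redirects[current]
        if current in seen:  # we have been here before: infinite loop
            return chain + [current], "loop"
        chain.append(current)
        seen.add(current)
        if len(chain) - 1 > max_hops:
            return chain, "too_long"
    # Zero or one hop is fine; two or more hops is a chain worth flattening.
    return chain, "ok" if len(chain) <= 2 else "chain"


# Hypothetical redirect map: old URL -> where it currently redirects.
redirect_map = {
    "/old-services/": "/services/",
    "/services/": "/web-design/",
    "/promo/": "/offers/",
    "/offers/": "/promo/",
}

chain_result = trace_redirects("/old-services/", redirect_map)
loop_result = trace_redirects("/promo/", redirect_map)
```

For anything labelled `chain`, the fix is to point the first URL straight at the last entry in the returned path.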

E-E-A-T: Why Trust Dictates Your Crawl Frequency

You might be thinking, “What does my author bio have to do with technical crawling?” Actually, quite a lot. In 2026, E-E-A-T (Experience, Expertise, Authoritativeness, and Trustworthiness) isn’t just a quality guideline; it’s a gatekeeper.

Google and AI agents prioritize crawling sites they actually trust. If your site has no author bios, no clear citations, and no verifiable credentials, the bots will visit you less often.

Why would they waste resources on a “faceless” brand when they can crawl a trusted expert instead?

Including clear author bylines and linking to your LinkedIn profile signals that there is a real human expert behind the keyboard, which encourages search engines to index your content faster.

Wrapping Up: Your Blueprint for a High-Performance 2026

So, where does that leave us? Honestly, mastering crawl efficiency isn’t just a “nice-to-have” technical chore anymore; it’s the actual backbone of your digital existence in 2026.

We’ve covered a lot, from building that “machine-readable bridge” with a flat site hierarchy to making sure your robots.txt isn’t accidentally slamming the door in Googlebot’s face.

The takeaway here is simple: if you want to rank, you have to be easy to find, easy to understand, and above all, fast.

By cleaning up your “crawl bloat,” fixing those draining redirect chains, and staying on top of your server performance, you aren’t just pleasing a bot.

You’re proving to the entire search ecosystem that your brand is a high-value, high-trust entity that deserves the spotlight.

Looking ahead, the future of SEO is clearly AI-driven. As we move further into 2026, we’re going to see even more sophisticated AI agents that prioritize context and topical authority over simple keyword matching.

If your technical foundation is messy, you’ll be left behind while your cleaner, more efficient competitors are cited as the primary sources in AI Overviews and SGE results.

Don’t let your hard work get buried in the digital noise. Start by hopping into your Google Search Console today and checking those Crawl Stats.

If things look a bit cluttered, use the steps we’ve outlined to trim the fat and sharpen your focus.

And hey, if you’re looking to truly dominate the Dubai or Sharjah market and need a world-class team to build that high-performance engine for you, we’re here to help. At Zumeirah, we don’t just build websites; we build the future of search.

Frequently Asked Questions

You know what? Even after a deep dive into technical SEO, there are always a few lingering questions. It’s a lot to take in, especially with the way the Dubai market moves so fast.

Here are the most common things people ask us at Zumeirah when we start talking about the “machine-readable bridge” we’re building for them.

What is crawl efficiency, really?

Honestly, it’s just a measure of how effectively search engine bots, and now AI agents, can move through your site without getting stuck. Think of it like a “friction score.” High crawl efficiency means Googlebot can find, render, and index your most important pages quickly, while low efficiency means it’s wasting its time in your “digital basement” on broken links or duplicate content.

How do I check my site’s crawl budget?

The quickest way to get a pulse on this is through Google Search Console. Go to Settings > Crawl Stats and open the report. You’ll see a graph of “Total crawl requests.” If that number is significantly lower than your total page count, or if you see a lot of 404/5xx errors in the “By response” table, you’ve got a crawl budget leak that needs fixing.

Does site speed actually affect how often I’m crawled?

Absolutely. It’s one of the biggest factors. If your server takes two seconds to respond instead of 200 milliseconds, Googlebot will crawl significantly fewer pages during its stay. Why? Because Google has to pay for the electricity and computing power to run those bots. If your site is “heavy” and slow, they’ll simply visit less often to save resources.

What are the best tools for optimizing this in 2026?

We have our favorites, of course. For first-party data, Google Search Console is your North Star. For deep technical audits, Screaming Frog or Sitebulb are the industry workhorses that reveal the hidden redirect chains and orphan pages. If you’re looking for all-in-one management, Ahrefs and Semrush have excellent crawl reports that help you benchmark against your competitors in the UAE.

How does AI change the way my site is crawled?

Here’s the thing: we aren’t just optimizing for Googlebot anymore. We’re optimizing for AI agents like GPTBot and Google-Extended. These agents are much more selective; they hunt for “entity-rich” content and structured data that they can easily turn into an AI Overview or a direct answer. If your site is hard to crawl, these AI systems will simply skip you and cite a competitor who has a cleaner, more accessible technical foundation.