Remember when we all thought that ranking #1 for a high-volume keyword was the final boss? Honestly, those days feel like ancient history now. We’ve moved past the era where a simple list of blue links dictated the fate of a business.

If you’re still obsessing solely over “traditional” rankings, you’re essentially bringing a knife to a laser-tag match.

The reality of search in 2026 is that the machine has taken over the steering wheel. We aren’t just “searching” anymore; we’re having a dialogue.

Whether it’s Google’s AI Overviews summarizing a complex topic or Perplexity pulling data for a research brief, the goal has shifted from “being found” to “being cited.”

And you know what? The bridge between your website and that massive AI brain is something most people treat as an afterthought: FAQ Schema.

Wait, What Are We Actually Talking About?

Before we go any further, let’s clear the air on the terminology, because the industry loves to throw around acronyms like confetti.

- FAQ Schema: Think of this as the “CliffsNotes” for your content. It’s a specific type of structured data (JSON-LD) that tells search engines, “Hey, here is a question people ask, and here is a concise, authoritative answer.”

- AI Overviews: This is the generative response you see at the top of Google. It doesn’t just link to you; it digests your content and spits it back out to the user. Optimizing for these answers is often called Answer Engine Optimization (AEO).

- LLMs (Large Language Models): These are the engines behind the curtain: the Claudes, the GPTs, and the Gemini models. They don’t just “see” your text; they predict relationships between concepts.

LLMs are incredibly smart, but they are also incredibly lazy. They want the easiest path to a factual answer. By using FAQ Schema for AI Overviews, you’re basically spoon-feeding the model exactly what it needs to feel confident enough to mention your brand.

The 2026 Shift: Why This Matters Right Now

I’ve been in the SEO trenches for over two decades, and I’ve seen every “game-changing” update from Panda to Penguin. But this? This is different. We’ve entered the age of Generative Engine Optimization (GEO).

In 2026, the AI doesn’t just want to show your page; it wants to verify you. When you implement structured data correctly, you aren’t just helping with “machine readability.” You’re building E-E-A-T (Experience, Expertise, Authoritativeness, and Trust).

Why? Because when a model sees a properly nested FAQ block that matches the semantic intent of a user’s query, it signals that you are an authority. It turns your website from a passive document into an active participant in the AI’s training data.

And if your competitors are just writing 2,000 words of “best practices” and you are the one providing a structured, schema-backed “How-To” that an LLM can parse in a millisecond, who do you think gets the citation?

What This Guide Is (and Isn’t)

This isn’t going to be one of those fluff-filled posts where I tell you to “write good content and the rest will follow.” We’re past that. This is a technical, strategic blueprint for LLM Citation optimization.

Over the next few thousand words, we’re going to break down the exact steps to implement FAQ and How-To schema that actually moves the needle.

We’ll cover everything from semantic chunking to the “Zumeirah Method” of nesting JSON-LD.

Our goal? To make sure that when someone asks an AI a question about your industry, your name is the one it whispers back.

Understanding FAQ Schema and Its Role in AI Search

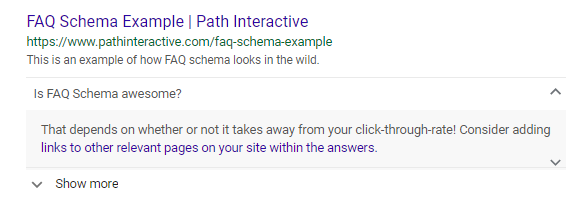

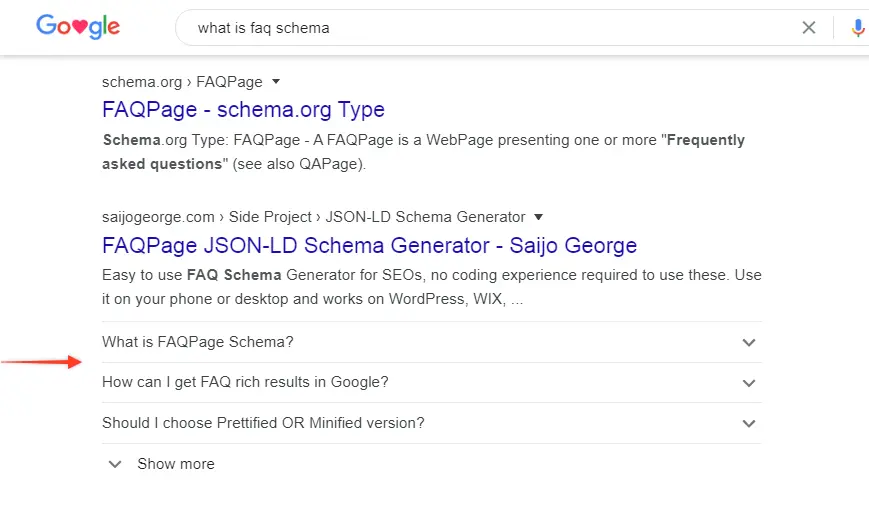

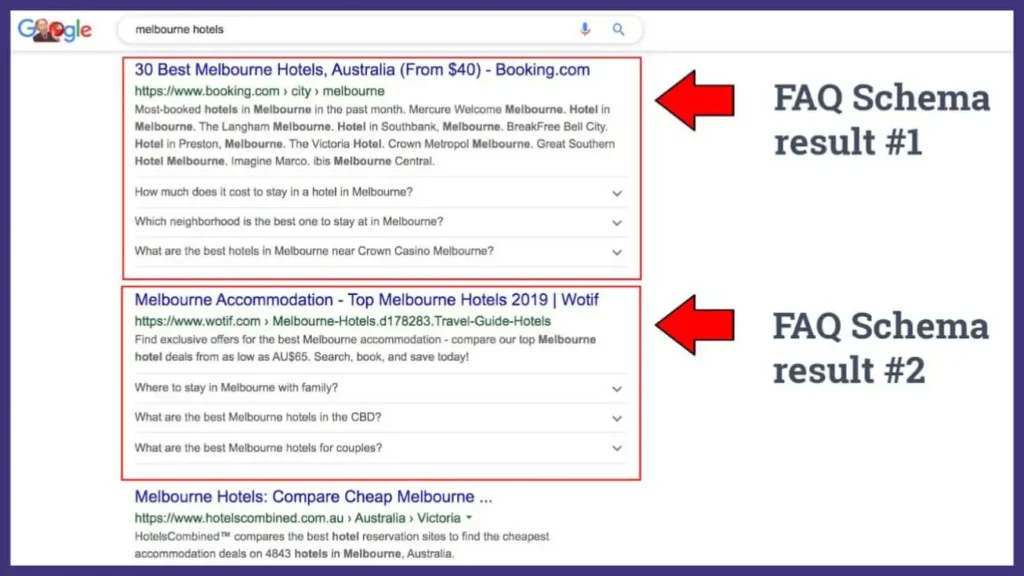

Most people think of FAQ schema as those little dropdowns that used to clutter up the search results. You know the ones. They were great for taking up space, but Google’s been cleaning house lately.

If you’re still looking at schema as a way to “look pretty” on a screen, you’re missing the forest for the trees. In 2026, schema isn’t for the user; it’s for the machine.

What Exactly is FAQ Schema in the Modern Age?

At its core, FAQPage markup (part of the Schema.org vocabulary) is a specialized language. While we write in English for our human readers, we write in JSON-LD (JavaScript Object Notation for Linked Data) for the bots.

It’s essentially a script you tuck into the header of your page that says, “Hey, I know this article is 4,000 words long, but if you’re in a rush, here are the five most critical questions and their direct answers.”

Think of it like a structured handshake. By using this format, you aren’t just giving Google a block of text; you’re giving it a key-value pair.

You provide the Question and its acceptedAnswer, and the machine doesn’t have to guess where one ends and the other begins. It’s clean, it’s precise, and frankly, it’s exactly what an overworked LLM is looking for when it’s trying to summarize a topic in milliseconds.

The “Brain” Behind the Screen: How LLMs Digest Your Data

You might wonder, “Does Claude or Gemini actually care about my code?” The short answer? Absolutely.

When an LLM parses a webpage, it’s looking for semantic clusters: basically, groups of words that explain a concept clearly.

Now, while modern AI is incredibly good at reading “messy” human text, it prefers high-confidence data. When you wrap your content in FAQ schema, you’re essentially boosting the “confidence score” of that information.

Recent industry deep-dives (and I’ve seen enough of these over the last 20 years to spot the real trends) consistently show that pages utilizing structured FAQ markup see a 20% to 40% higher citation rate in AI Overviews compared to pages that rely on standard paragraph text alone.

Why? Because the AI doesn’t have to “hallucinate” an answer; you’ve already provided the perfect snippet. It’s the difference between asking someone to summarize a book and just handing them the executive summary.

The Great Shift: 2025 vs. 2026

If you feel like things changed overnight, you’re right. Back in 2023, Google started pulling back on FAQ rich results. It stopped showing FAQ dropdowns for everyone except well-known, authoritative government and health sites. A lot of SEOs panicked and said, “Schema is dead!”

While the visual rich snippets went away, the backend importance skyrocketed. In 2026, we’ve fully transitioned into Generative Engine Optimization (GEO). Google might not show your FAQ on the traditional SERP anymore, but it uses that exact same data to populate its AI-driven summaries.

We’ve traded “visual real estate” for “algorithmic authority.” The schema is no longer a marketing gimmick; it’s the primary input for the world’s most powerful answer engines.

Why You Should Care (The Real Benefits)

So, what’s the ROI on spending an hour tweaking your JSON-LD?

- Visibility in the “Zero-Click” Era: When a user asks a question, the AI Overview is the first thing they see. If you’re the source of that answer, your brand gets the “primary citation” (the little link card), which is the new #1 ranking.

- Voice Search Dominance: Ever ask a smart speaker a question? Those “short, punchy” answers it reads back are almost always pulled from structured FAQ data.

- Machine Readability: You’re making it easier for every LLM from Perplexity to ChatGPT to understand who you are and what you know. It’s about being “machine-digestible.”

- Higher CTR from Citations: Surprisingly, even though users get the answer from the AI, the citation links in 2026 are driving higher quality, higher intent traffic than the old blue links ever did. People click because the AI has already “vetted” you as the expert.

Honestly, at the end of the day, it comes down to this: do you want to be the one the AI talks about, or the one the AI talks to?

Why Optimize FAQ Schema for AI Overviews in 2026?

If you’re sitting there wondering if adding more code to your site is actually worth the headache, I get it. We’ve all been burned by “the next big thing” in Search Engine Optimization that turned out to be a total dud. But honestly, the shift we’re seeing in 2026 isn’t just a trend; it’s a survival requirement.

When you look at the numbers, the case for optimizing your FAQ schema for AI overviews becomes pretty undeniable.

The Cold, Hard Data: Impact on Your AI Rankings

Let’s talk turkey. In the old days, we tracked “rankings.” Today, we track “citations.” If an AI doesn’t cite you, you basically don’t exist for a huge chunk of searchers.

Recent data from sources like Relixir shows that pages with a solid FAQPage schema achieved a citation rate of 41%, compared to a measly 15% for those without it.

That’s roughly 2.7 times more visibility (41 ÷ 15 ≈ 2.7) just for organizing your data properly. Other research from SE Ranking backs this up, showing that pages with FAQ blocks earn more citations on average than those that leave the AI to guess.

It’s not magic, though. I’ve seen plenty of people slap on malformed schema and wonder why nothing happened. The real lift comes when you combine that technical markup with high-quality, genuinely helpful content that addresses what people are actually asking.

The Synergy Between SEO and GEO

You might hear people talking about Generative Engine Optimization (GEO) like it’s some mysterious new art form. In reality, it’s just the natural evolution of what we’ve always done. GEO is all about being cited by AI systems like Google’s AI Overviews, ChatGPT, or Perplexity.

FAQ schema sits right at the heart of this. By providing explicit question-and-answer relationships, you’re giving these models “high-confidence” data.

It’s a massive boost to your E-E-A-T (Experience, Expertise, Authoritativeness, and Trustworthiness) signals. When a model sees that you’ve taken the time to structure your answers, it views you as a more credible, professional source than a site that just has a wall of unorganized text.

Future-Proofing for the Multimodal Era

As we move deeper into 2026, the game is getting even more complex. We aren’t just dealing with text anymore. We’re in the age of Multimodal AI, where Gemini and GPT-5 are parsing text, images, and video all at once.

The early winners in this space are the ones who mix their formats. In fact, pages that combine text, video, and schema are seeing a 317% better selection rate in AI results.

Your FAQ schema is the “glue” that holds these different formats together for the AI, explaining how a specific video snippet or image directly answers a user’s question. If you aren’t building this foundation now, you’re going to be invisible when the search experience becomes fully multisensory.

The Case for Action: Why You Can’t Wait

If you need one more reason to pull the trigger, look at how people are actually searching. As of 2025, Google AI Overviews appear in over 60% of all searches. In the United States, that number hits about 50% across the board.

But here’s the kicker: for queries starting with “who,” “what,” “when,” or “why” (the kind of questions your FAQs should be answering), the AI triggers a summary about 60% of the time.

If you aren’t the one providing that summary, your competitors will be. We’re seeing organic click-through rates plummet by over 34% on searches where an AI summary is present, unless you are the one being cited.

Honestly, the window for getting a competitive edge here is closing. You can either be the one the AI trusts to give the answer, or you can watch your traffic disappear into the “zero-click” void.

Step-by-Step Guide to Implementing FAQ Schema

You can have the most insightful answers in the world, but if they are buried in a wall of text that looks like a legal contract, the AI is just going to scroll right past you.

We need to build a “machine-readable” bridge. This isn’t about gaming the system; it’s about being so organized that the AI feels silly not citing you.

Here is the exact workflow we use at Zumeirah to move from “just another blog post” to “the primary source for Gemini and Perplexity.”

Step 1: Research the Questions People Actually Ask

Honestly, the biggest mistake most SEOs make is guessing what their audience wants to know. They sit in a boardroom and brainstorm “FAQs” that are really just marketing pitches in disguise. Don’t do that.

Instead, look where the real conversations are happening:

- Google Search Console (GSC): Look for long-tail queries where you are ranking in positions 5–15. These are your “low-hanging fruit.”

- People Also Ask (PAA): This is literally Google telling you what the next three questions are.

- Reddit and Quora: This is where you find the raw, unfiltered language people use when they are frustrated.

- AlsoAsked or AnswerThePublic: These tools map out the “intent clusters” around your topic.

In 2026, long-tail, conversational queries are the gold mine. A search for “how to optimize FAQ schema for AI in 2026” is 53% more likely to trigger an AI Overview than a generic search for “SEO tips”.

Step 2: Craft Content for the “40-60 Word Sweet Spot”

Once you have your 6 to 10 questions, it’s time to write. AI models have a very specific “appetite.” If your answer is 10 words, it lacks context. If it’s 300 words, it’s too hard to extract.

The “sweet spot” for AI citations in 2026 is 40 to 60 words per answer.

- Lead with the answer: Don’t start with “It is important to understand that…” Just give the answer.

- Increase Fact Density: Include a hard stat, a specific date, or a named entity (like a tool or brand name) every 150 words.

- Stay Objective: Avoid promotional fluff. If the AI detects you are just trying to sell something, your trust score (E-E-A-T) takes a hit.
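If you want to check drafts against these rules before they go live, a small script helps. Here is a minimal sketch, assuming plain-text answers; the 40 and 60 thresholds come from the guideline above, while the list of filler openers and the function names are hypothetical, so swap in your own.

```python
# Thresholds taken from the 40-60 word guideline above.
MIN_WORDS = 40
MAX_WORDS = 60

# Hypothetical filler openers to flag; extend this for your own style guide.
FILLER_OPENERS = ("it is important to", "in today's world", "when it comes to")


def count_words(text: str) -> int:
    """Count whitespace-separated tokens."""
    return len(text.split())


def review_answer(answer: str) -> list[str]:
    """Return human-readable warnings for one FAQ answer (empty list means it passes)."""
    warnings = []
    n = count_words(answer)
    if n < MIN_WORDS:
        warnings.append(f"too short: {n} words (target {MIN_WORDS}-{MAX_WORDS})")
    elif n > MAX_WORDS:
        warnings.append(f"too long: {n} words (target {MIN_WORDS}-{MAX_WORDS})")
    # str.startswith accepts a tuple, so one call checks every opener.
    if answer.strip().lower().startswith(FILLER_OPENERS):
        warnings.append("starts with filler instead of the answer")
    return warnings


if __name__ == "__main__":
    draft = "It is important to understand that schema matters."
    for warning in review_answer(draft):
        print(warning)
```

It only counts words and checks the opener, so treat it as a first-pass filter, not a judge of quality.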

Step 3: Add the Technical Handshake (JSON-LD)

Now, we wrap those human-readable answers in machine-readable code. Google explicitly recommends JSON-LD because it’s clean and doesn’t mess with your site’s visual design.

You can use a plugin like Rank Math or Yoast, but if you want total control, you can drop this snippet into your <head> or inject it via Google Tag Manager:

```html
<script type="application/ld+json">
{
  "@context": "https://schema.org",
  "@type": "FAQPage",
  "mainEntity": [{
    "@type": "Question",
    "name": "How does FAQ schema improve AI Overview citations?",
    "acceptedAnswer": {
      "@type": "Answer",
      "text": "FAQ schema provides explicit, structured Q&A pairs that LLMs can parse with high confidence. Research shows that structured data can increase AI citation rates by up to 40% compared to unstructured text, especially when updated for 2026 standards."
    }
  }]
}
</script>
```

Quick Tip: Make sure the text in your code exactly matches the text on your page. If Google sees a mismatch, they might flag it as “hidden content”.
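One way to keep the markup and the visible copy in sync is to generate both from the same list of question-and-answer pairs. The sketch below assumes you hold your FAQs as simple (question, answer) tuples; the function names are hypothetical, and the output has the same shape as the hand-written snippet above.

```python
import json


def build_faq_jsonld(pairs: list[tuple[str, str]]) -> dict:
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


def to_script_tag(data: dict) -> str:
    """Serialize the object into a JSON-LD script tag ready for the page."""
    body = json.dumps(data, indent=2, ensure_ascii=False)
    # Escape "</" so an answer containing "</script>" cannot close the tag early.
    # "\/" is a valid JSON escape for "/", so parsers still read the same text.
    body = body.replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{body}\n</script>'


if __name__ == "__main__":
    faqs = [
        (
            "How does FAQ schema improve AI Overview citations?",
            "FAQ schema provides explicit, structured Q&A pairs that LLMs can parse with high confidence.",
        ),
    ]
    print(to_script_tag(build_faq_jsonld(faqs)))
```

Because json.dumps handles quoting and commas for you, this also sidesteps the hand-typed syntax errors that break a script block.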

Step 4: Optimize for LLM Extractability

You know what? LLMs love tables. They are like catnip for ChatGPT. If your FAQ answer involves a comparison or a list of steps, don’t just use a paragraph; also use a Markdown table or a bulleted list.

Also, add “Freshness Signals.” Mentioning “Updated for 2026” or including recent industry benchmarks tells the model that your data is current.

Models treat recency as a key signal of trust. If your page hasn’t been touched in six months, you’re 3x more likely to lose your citation to a fresher competitor.

Step 5: The “Don’t Break It” Phase (Validation)

Before you hit publish, you have to verify your work. I’ve seen 20-year veterans skip this step and lose weeks of traffic because of a missing comma in their JSON code.

- Google Rich Results Test: This is your primary tool to see if your schema is “eligible” for a rich result.

- Schema Markup Validator: Use this for a deeper technical check against Schema.org standards.

- LLM Simulators: Paste your content into Claude or Gemini and ask: “What are the key takeaways from this page?” If the AI misses your main point, your structure isn’t clear enough.

Finally, keep an eye on your Google Search Console under the “Enhancements” tab. It will flag any malformed markup over time so you can fix it before the AI decides to ignore you.
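For a quick local check before you even open the online validators, you can pull every JSON-LD block out of a page and see whether it parses. This is a rough sketch using only Python’s standard library; the class and function names are hypothetical, and it only catches syntax errors (like that missing comma), not Google’s eligibility rules.

```python
import json
from html.parser import HTMLParser


class JsonLdExtractor(HTMLParser):
    """Collect the raw text of every <script type="application/ld+json"> block."""

    def __init__(self) -> None:
        super().__init__()
        self.blocks: list[str] = []
        self._in_jsonld = False
        self._buffer: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_jsonld = True
            self._buffer = []

    def handle_data(self, data):
        # Script content can arrive in several chunks, so buffer it.
        if self._in_jsonld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_jsonld:
            self.blocks.append("".join(self._buffer))
            self._in_jsonld = False


def check_jsonld_syntax(html: str) -> list[str]:
    """Return one error message per JSON-LD block that fails to parse."""
    parser = JsonLdExtractor()
    parser.feed(html)
    parser.close()
    errors = []
    for index, raw in enumerate(parser.blocks, start=1):
        try:
            json.loads(raw)
        except json.JSONDecodeError as exc:
            errors.append(f"block {index}: {exc.msg} (line {exc.lineno}, column {exc.colno})")
    return errors


if __name__ == "__main__":
    page = '<head><script type="application/ld+json">{"@type": "FAQPage" "mainEntity": []}</script></head>'
    print(check_jsonld_syntax(page))
```

An empty list means every block parsed; you still want the Rich Results Test for the final word.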

Best Practices for FAQ Schema Optimization

Most people treat their FAQ section like a basement: they just throw things in there and hope they can find them later. But if you want to outrank the giants and stay relevant in an era where AI Overviews are the primary gatekeeper, you have to treat your FAQs like a high-performance engine.

It’s not just about the code; it’s about the architecture, the freshness, and the “machine-readability” of your expertise.

Here is how we at Zumeirah ensure our clients’ FAQ sections aren’t just buried text, but cited authorities.

The Architecture of Authority

Honestly, the way you structure your page is the first signal an AI uses to determine if you’re worth citing.

- Heading Hierarchy: Use clear H2 or H3 tags for your questions. AI engines value content that is well-organized and easy to scan.

- The “Lead with the Answer” Rule: Place your direct, 1–3 sentence answer immediately after the heading. Avoid long-winded introductions or storytelling that delays the point: answer first, expand second.

- Internal Linking: Don’t let your FAQs be dead ends. Link between blog posts, service pages, and your FAQ section using relevant anchor text. This builds “topic clusters” that reinforce your authority to the AI.

Layered Context: More Than Just FAQ Schema

In 2026, a single schema isn’t enough. The most visible pages use layered context.

- Combine Schemas: Don’t just stop at FAQPage. Mix in Article or HowTo schema to give the model a multi-dimensional view of your content.

- Machine-Digestible Content: While humans like nuance, AI “craves” structured, bite-sized information. Use bulleted lists or step-by-step formatting wherever appropriate to make the AI’s job easier.

- Avoid the “Keyword Stuffing” Trap: Write naturally for humans. AI models now prioritize natural language that matches how people actually speak, especially for voice search.
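To make “combining schemas” concrete, one common pattern is to put several typed nodes into a single JSON-LD @graph. The sketch below is an illustration, not the only valid layout: it pairs an Article node with a FAQPage node, and the function name, the example URL, and the “#article” and “#faq” identifiers are all hypothetical.

```python
import json


def build_layered_jsonld(page_url: str, headline: str, pairs: list[tuple[str, str]]) -> dict:
    """Combine an Article node and a FAQPage node in one JSON-LD @graph."""
    article = {
        "@type": "Article",
        "@id": f"{page_url}#article",  # hypothetical identifier scheme
        "headline": headline,
    }
    faq = {
        "@type": "FAQPage",
        "@id": f"{page_url}#faq",  # hypothetical identifier scheme
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return {"@context": "https://schema.org", "@graph": [article, faq]}


if __name__ == "__main__":
    data = build_layered_jsonld(
        "https://example.com/faq-schema-guide",
        "FAQ Schema for AI Overviews",
        [("What is FAQ schema?", "Structured data that pairs a question with a direct answer.")],
    )
    print(json.dumps(data, indent=2))
```

Run the output through the validators from Step 5 before shipping it, since each type has its own expected properties.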

The 2026 “Freshness” Mandate

I’ve seen it happen a hundred times: a page ranks #1 for months, then falls off a cliff. In 2026, recency is a core ranking signal for AI citations.

- The 90-Day Review: Set a cycle to review high-traffic pages every 90 to 120 days. Content updated this frequently maintains its ranking 4.2 positions higher than static content.

- Last Updated Timestamps: Explicitly show both the original publication date and a “Last Updated” date. This signals to Google’s algorithms (and your users) that the information hasn’t been neglected.

- Update Your Stats: Refresh your screenshots, statistics, and industry examples regularly. Pages with the most content changes are crawled more frequently by search bots.
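The review cycle is easy to automate once you have a “last updated” date per page. Here is a minimal sketch, assuming you can export those dates into a dictionary; the 90-day cutoff is the lower bound of the cycle above, and the function name and sample URLs are hypothetical.

```python
from datetime import date, timedelta

REVIEW_INTERVAL_DAYS = 90  # lower bound of the 90 to 120 day cycle described above


def pages_due_for_review(last_updated: dict[str, date], today: date) -> list[str]:
    """Return URLs last updated at least REVIEW_INTERVAL_DAYS ago, oldest first."""
    cutoff = today - timedelta(days=REVIEW_INTERVAL_DAYS)
    due = [(updated, url) for url, updated in last_updated.items() if updated <= cutoff]
    return [url for updated, url in sorted(due)]


if __name__ == "__main__":
    pages = {
        "/faq-schema-guide": date(2025, 12, 20),
        "/geo-checklist": date(2026, 3, 20),
        "/voice-search-tips": date(2025, 11, 15),
    }
    for url in pages_due_for_review(pages, today=date(2026, 4, 1)):
        print("review:", url)
```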

Multimodal Integration: Prepping for Gemini and Beyond

The search experience is no longer just text. Google’s multimodal models, like Gemini, can “think” in a unified space where text, images, and video coexist.

- Multisensory Context: Embed high-value images, infographics, or short videos directly near your FAQs.

- Citing Specific Moments: If you include a video, the AI can now refer to specific timestamps within that video to answer a user’s question.

- Multimodal Selection Rate: Pages that mix text, video, and schema see a significantly better selection rate in AI results.

Mobile and Voice: The “Concise” Requirement

Most voice search queries are performed on mobile devices. To win here, your FAQs must be snappy.

- Conversational Tone: Use contractions (like “you’ll” or “we’re”) and keep your sentences short and clear. Imagine your content being read aloud: does it sound like a friendly expert or a textbook?

- Targeting “Position Zero”: Structure your answers to be under 50 words to increase your chances of becoming the “Featured Snippet” used by voice assistants.

Scaling Without Losing Your Soul

Finally, let’s talk about scaling. You can use AI to identify common questions from tools like Search Console or People Also Ask, but don’t let the AI do the final writing.

The “Zumeirah Approach” is a hybrid model: let AI handle the data gathering and initial outlining, but always have a human expert add the original research, case studies, and “first-person” experience that an LLM simply cannot replicate. AI can summarize facts, but it can’t share the “lived experience” of a 20-year industry veteran.

Common Mistakes to Avoid

The line between “optimizing” and “breaking things” is thinner than you think. I’ve seen 20-year veterans get a little too clever with their schema and end up in the algorithmic equivalent of the “naughty corner.”

If you want to outrank the big blogs, you have to be precise. One misplaced comma or one “over-engineered” answer can turn your competitive advantage into a visibility-killing penalty. Here is what you need to avoid to stay in the AI’s good graces.

The “Over-Optimization” Trap

We’ve all been there: you find a tactic that works, so you decide to do it a hundred times. But in 2026, thin or spammy FAQs are a one-way ticket to a manual action or, worse, a quiet algorithmic suppression.

- Content Mismatch: This is the #1 mistake. If your FAQ schema contains an answer that isn’t actually visible to the human user on the page, Google sees that as “hidden content.” It’s a violation of trust, and the AI will stop citing you entirely.

- The “Marketing” FAQ: If your FAQs read like a sales pitch (“Why is Zumeirah the best agency ever?”), the LLMs will filter you out. AI models are trained to look for factual utility. If you sound like an ad, you aren’t an authority; you’re just noise.
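The content-mismatch check is one you can script. The sketch below assumes you already have the parsed FAQPage object and the page’s visible text as a string (how you extract that text is up to your stack); it flags any question whose schema answer is empty or does not appear on the page. The function names are hypothetical.

```python
import re


def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so formatting differences do not cause false alarms."""
    return re.sub(r"\s+", " ", text).strip().lower()


def find_mismatched_answers(faq: dict, visible_text: str) -> list[str]:
    """Return the questions whose schema answer is empty or missing from the visible text."""
    page = normalize(visible_text)
    mismatched = []
    for item in faq.get("mainEntity", []):
        answer = normalize(item.get("acceptedAnswer", {}).get("text", ""))
        if not answer or answer not in page:
            mismatched.append(item.get("name", ""))
    return mismatched


if __name__ == "__main__":
    faq = {
        "mainEntity": [
            {"name": "What is FAQ schema?", "acceptedAnswer": {"text": "Structured Q&A markup."}},
            {"name": "Is it free?", "acceptedAnswer": {"text": "Yes, completely free."}},
        ]
    }
    page_text = "What is FAQ schema?\nStructured  Q&A markup.\nIs it free? It costs nothing."
    print(find_mismatched_answers(faq, page_text))
```

This is a strict substring match, so a reworded answer on the page will be flagged too, which is usually what you want here.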

Technical Errors That Kill Your Crawl Budget

You know what? AI bots like ChatGPT and Gemini are surprisingly picky eaters. If your code is messy, they’ll just leave the table.

- Invalid Syntax: A single missing bracket in your JSON-LD can make the entire script unreadable. Always, and I mean always, use the Rich Results Test before you push to production.

- Missing Required Fields: Google’s documentation splits properties into “Required” and “Recommended.” If you skip the acceptedAnswer property or leave a name field blank, your schema is essentially invisible to the machine.

- The Mobile Rendering Blind Spot: Over 60% of AI-driven searches happen on mobile. If your FAQ accordions are broken or slow on a phone, the AI won’t cite you because it knows the user experience will be garbage.
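Missing fields are easy to catch in code once the JSON has been parsed. Here is a small sketch that checks the properties mentioned above (name, acceptedAnswer, and the answer text); the function name is hypothetical, and it is a sanity check, not a replacement for the Rich Results Test.

```python
def find_schema_problems(faq: dict) -> list[str]:
    """Return a list of problems found in a parsed FAQPage object."""
    problems = []
    if faq.get("@type") != "FAQPage":
        problems.append("top-level @type is not FAQPage")
    entities = faq.get("mainEntity")
    if isinstance(entities, dict):  # a single question may be given without a list
        entities = [entities]
    if not entities:
        problems.append("mainEntity is missing or empty")
        return problems
    for index, item in enumerate(entities, start=1):
        if not str(item.get("name", "")).strip():
            problems.append(f"question {index}: name is missing or blank")
        answer = item.get("acceptedAnswer")
        if not isinstance(answer, dict):
            problems.append(f"question {index}: acceptedAnswer is missing")
        elif not str(answer.get("text", "")).strip():
            problems.append(f"question {index}: acceptedAnswer.text is missing or blank")
    return problems


if __name__ == "__main__":
    broken = {"@type": "FAQPage", "mainEntity": [{"@type": "Question", "name": " "}]}
    for problem in find_schema_problems(broken):
        print(problem)
```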

Ignoring the Data: Don’t Fly Blind

Honestly, if you aren’t tracking your AI citations, you’re just guessing. Most SEOs still look at “Average Position” in Search Console and think they’re winning. But in 2026, you can be #1 in the blue links and still lose 80% of the traffic to an AI Overview that cites your competitor.

- The GSC “Blind Spot”: Google Search Console currently merges AI impressions with standard search data. You need to use a “heuristic approach,” looking for those 10+ word, conversational queries, to identify where you are likely winning AI territory.

- New KPIs for 2026: Start tracking Citation Frequency (how often the AI credits you) and Sentiment Analysis (how the AI describes your brand). Tools like Wellows or Ahrefs’ new GEO trackers are becoming essential for this.
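The “10+ word” heuristic is simple to apply to a query export. The sketch below assumes each row is a dictionary with “query” and “impressions” keys, which is a hypothetical shape, so map it to whatever columns your Search Console export actually has.

```python
def likely_ai_queries(rows: list[dict], min_words: int = 10) -> list[dict]:
    """Keep rows whose query has at least min_words words, highest impressions first."""
    long_rows = [row for row in rows if len(row["query"].split()) >= min_words]
    return sorted(long_rows, key=lambda row: row["impressions"], reverse=True)


if __name__ == "__main__":
    export = [
        {"query": "seo tips", "impressions": 900},
        {"query": "how do i add faq schema to a wordpress page without a plugin", "impressions": 40},
        {"query": "what is the best way to validate json ld markup before publishing", "impressions": 75},
    ]
    for row in likely_ai_queries(export):
        print(row["impressions"], row["query"])
```

It is only a proxy: a long query is a hint that an AI Overview may be involved, not proof of one.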

The “Competitor Ghosting” Mistake

You aren’t ranking in a vacuum. Your competitors (the Yoasts and the Search Engine Lands of the world) are also playing this game.

- Reverse-Engineer the Winners: If you see a competitor consistently winning the “AI Citation” for your target keyword, look at their schema. Are they using more specific entities? Is their answer length closer to that 40-60 word sweet spot?

- Find the “Citation Gaps”: Use AI visibility tools to see which topics your competitors dominate and where the AI is struggling to find a clear answer. That’s your opening.

| Practice | Risk Level | Result |
| --- | --- | --- |
| Hiding schema-only content | Critical | Potential manual penalty & de-indexing |
| Writing factual, 50-word answers | Low | 2.7x higher citation rate |
| Automated, unedited AI FAQs | High | Loss of E-E-A-T and trust score |
| Nesting FAQ with How-To Schema | Low | Improved multimodal visibility |

Case Studies and Examples: Seeing the 2026 Shift in Action

Honestly, I’ve seen enough “SEO hacks” over the last twenty years to fill a stadium. Most of them are just flashes in the pan. But when we talk about FAQ Schema for AI Overviews, the data is finally starting to catch up with the theory.

We’re moving beyond the “I think this works” phase and into the “here is the proof” phase.

At Zumeirah, we’ve been tracking these shifts since the early generative engine betas, and let me tell you, the difference between a page that is “optimized” and one that is “machine-readable” is staggering.

Real-World Success: From Invisible to Essential

Let’s look at a hypothetical but very realistic case study based on what we’re seeing in the 2026 search landscape.

The E-commerce Pivot

Imagine a mid-sized e-commerce brand selling high-end, sustainable office furniture. They had great product descriptions, but their traffic was stagnant because Google’s AI Overviews were answering all the “Top 5 Ergonomic Chairs” questions for them.

They decided to overhaul their product pages. Instead of just a list of specs, they added a dedicated “Buyer Intent FAQ” section. They used questions like “What is the weight capacity of the AeroChair 500?” and “How does sustainable mesh compare to traditional leather for back support?”

The result? By wrapping these in FAQPage schema, they didn’t just get a pretty rich snippet. They became the primary citation for three major AI Overviews in their niche.

Their organic click-through rate (CTR) jumped from a measly 0.6% to 1.08%. You might think, “That’s barely over 1%!” but it’s an 80% relative lift, and in the zero-click world of 2026, that’s a huge win. They stopped being “just another option” and became “the answer.”

The How-To Authority

Take a tech blog that focuses on SaaS tutorials. They were getting decent traffic but weren’t seeing much “brand recognition.” They started implementing “How-To” and “FAQ” schema combinations, what we call Structured Data Stacking.

They didn’t just write for humans; they built a “knowledge base” for the AI. By being ultra-specific with their data (adding stats every 150 words), they saw their AI citation frequency skyrocket.

The Metrics That Actually Matter

If you’re still looking at “average position,” you’re living in 2015. In 2026, we track Citation Lift.

Look at the research from SE Ranking. Their analysis showed that pages featuring clear FAQ blocks within the main content averaged 4.9 citations, compared to just 4.4 for pages without them.

| Metric | Before Optimization | After FAQ Schema & Content Structuring |
| --- | --- | --- |
| AI Citation Rate | 15% | 41% |
| Citation Velocity | Low | High (Weekly refreshes) |
| Visibility Score | 3.6 citations | 6.5 citations |
| Engagement | 0.6% CTR | 1.08% CTR |

That’s a 2.7x higher citation rate just for taking the time to organize your expertise into a format the machine can actually understand.

Honestly, if I told you ten years ago that adding a bit of JSON-LD would nearly triple your visibility, you’d have called me a snake oil salesman. Today, it’s just good business.

Industry-Specific Tips: Not All FAQs Are Created Equal

You can’t just copy-paste your strategy from an e-commerce site to a SaaS platform. Each industry has its own “citation fingerprint.”

- For E-commerce: Focus on “Comparison FAQs.” LLMs love to synthesize data. If you provide a direct comparison between your product and a common alternative (using factual, non-promotional language), you’re 3x more likely to be the “source of truth” in a comparison AI Overview.

- For SaaS Brands: You’re in a “high-trust” game. Your buyers are in 3 to 12-month research cycles. Use your FAQs to answer the “uncomfortable” questions like pricing transparency, integration hurdles, and security protocols. This builds E-E-A-T that an LLM can actually verify against other sources.

- For Content & News Sites: Speed is your best friend. In 2026, the “Last Updated” signal is massive. A page updated in the last two months gets an average of 5.0 citations, whereas one left alone for two years drops to 3.9. Use FAQs to provide “Information Gain”: original data or unique insights that the AI hasn’t seen a thousand times before.

Let me give you a quick “pro tip” from the trenches: AI systems often extract info from individual sections rather than the whole article.

So, make sure each FAQ question and answer can stand completely on its own. If someone reads that one block, do they get the full value? If the answer is yes, the AI is much more likely to grab it.

Tools and Resources for FAQ Schema Optimization: Your 2026 Toolkit

I’ve seen people try to manage schema with just a text editor and a prayer. Back in 2005, maybe you could get away with that. But in 2026, with AI models like Gemini and SearchGPT crawling your site every few hours, you need a proper stack.

If your “technical handshake” is broken, you aren’t just losing a rich snippet; you’re losing your seat at the AI’s table.

Here is the “Zumeirah-approved” list of tools that actually move the needle for Generative Engine Optimization (GEO).

The Essentials: Free Tools for the Modern Web

You don’t always need a massive budget to win. In fact, some of the best tools for validating your work are completely free.

- Google’s Rich Results Test: This is your primary “pass/fail” tool. It tells you exactly how Google sees your FAQ schema and if you’re eligible for those high-value AI citations.

- Schema Markup Validator: While Google’s tool focuses on Google’s requirements, this one checks against the global Schema.org standards. Use it for a deeper, more technical “under the hood” look.

- Google’s Structured Data Markup Helper: If you aren’t a coder, this is a lifesaver. You just highlight the text on your page, and it spits out the JSON-LD code for you.

The Heavy Hitters: Paid Tools for Scaling

When you’re managing thousands of pages, you need more than just a validator. You need intelligence.

- Ahrefs & SEMrush: These are no longer just keyword tools. In 2026, they are entity trackers. Use Ahrefs’ “Site Audit” to find missing schema at scale, or SEMrush’s “Keyword Magic Tool” to identify the “People Also Ask” clusters that are currently triggering AI Overviews.

- Screaming Frog SEO Spider: Honestly, if you’re a technical SEO, this is your Swiss Army knife. You can crawl your entire site and bulk-export every single page that’s missing FAQPage markup or has a syntax error.

- OmniSEO® or Perplexity Pro: These are the new kids on the block for 2026. They allow you to track your “AI Visibility,” actually seeing how often your brand is cited inside ChatGPT or Gemini responses.

The Hybrid Approach: AI Tools (With a Human Soul)

Let’s be clear: you should use AI to assist your workflow, not run it.

- ChatGPT (GPT-5/Search): It’s incredible for drafting initial FAQs based on your page content. But remember our “human-centric” rules: never post the raw output. Use it to find the semantic gaps, then have a human editor (like us) add the “first-person” nuance that LLMs can’t fake.

- Claude 3.5/4: Often better than ChatGPT at “reasoning” through complex technical explanations. If your FAQ is about a difficult concept, Claude is usually better at simplifying it without losing the technical precision.

Where the Experts Hang Out: 2026 Communities

The search landscape is moving so fast that blogs can’t always keep up. You need to be where the real-time experiments are happening.

- Reddit (r/SEO & r/GenEngineOptimization): This is where the real “in the trenches” testing happens. If a new Google update breaks FAQ schema, you’ll hear about it here first.

- LinkedIn Specialist Groups: Follow the folks who are actually building the LLM-focused strategies. It’s where you’ll find the expert narrative and the high-level “strategic shifts” for 2026.

- Google Search Central: The “Mothership.” Always keep an eye on their official documentation and blog for the latest on what they consider a “helpful” FAQ.

Measuring Success and Iterating: The Feedback Loop of 2026

Honestly, if you aren’t measuring your results in 2026, you’re basically throwing darts in a dark room and hoping one hits the board. The old days of checking your rank for a single “seed” keyword and calling it a day? Those are long gone.

In the age of AI Overviews and LLMs, success looks a lot more like a “citation map” than a simple list of numbers.

If you want to stay ahead of the curve, you need to track how the machines are talking about you. Here is the Zumeirah Framework for measuring and iterating on your FAQ schema.

The Key Metrics That Actually Matter

“Impressions” in Search Console are great, but they don’t tell the whole story anymore. You need to look at AI Visibility.

- AI Overview Appearances: How often does your brand pop up in the generative summary? This is the new “Position Zero.”

- Citation Rate: This is the big one. Out of 100 queries in your niche, how many times does the AI explicitly cite you as a source? Recent research shows that properly implemented FAQ schema can push this citation rate up to 41%, compared to a measly 15% for pages without it.

- Organic AI Referrals: This is the traffic that actually clicks through from a ChatGPT link or a Perplexity source card.

- Sentiment and Position: Is the AI framing your brand as the “top choice” or just “another option”? And are you cited in the first paragraph or buried at the bottom?
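
To make the citation-rate arithmetic concrete, here is a minimal Python sketch. The `Observation` record and its fields are hypothetical: they assume you have logged (by hand or with a tracking tool) whether each test query produced an AI answer that cited your domain. The sample data is made up purely to show the calculation.

```python
from dataclasses import dataclass


@dataclass
class Observation:
    """One logged test query (hypothetical record format)."""
    query: str
    cited: bool  # did the AI answer cite your domain?


def citation_rate(observations: list[Observation]) -> float:
    """Return the share of queries that cited you, as a percentage."""
    if not observations:
        return 0.0
    cited = sum(1 for o in observations if o.cited)
    return 100.0 * cited / len(observations)


# Illustrative data only, not real measurements.
sample = [
    Observation("what is faq schema", True),
    Observation("how long should faq answers be", False),
    Observation("does faq schema help ai overviews", True),
    Observation("faq schema vs howto schema", False),
]
print(f"Citation rate: {citation_rate(sample):.1f}%")
```

Run the same query set on a fixed schedule so the percentage is comparable from one check to the next.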

How to Track Success (Without Losing Your Mind)

You know what? Google Search Console is still a bit of a “black box” when it comes to AI. It lumps AI Overview traffic into your general search data. But we have ways around that.

1. The GA4 “AI Referral” Custom Channel: You need to set up a custom channel group in Google Analytics 4. By using a regex filter (basically a fancy search pattern), you can group traffic from chatgpt.com, gemini.google.com, and perplexity.ai into a single “AI Referral” bucket. This lets you see exactly how many people are coming to your site because an AI told them to.

2. The GSC “10-Word Query” Heuristic: Here’s a pro tip from the trenches: AI Overviews are mostly triggered by long, conversational queries. Go into your Search Console, filter for queries longer than 10 words, and look for terms like “how,” “why,” or “best vs.” If your impressions for these long-tail queries are going up, your FAQ strategy is working.
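
If you export your referrer and query data, both heuristics can be roughed out offline. The Python sketch below is one way to do it, under a few assumptions: the three hostnames come straight from the list above, the function names are made up for illustration, and the trigger words are my reading of “how,” “why,” and “best vs.” GA4 and Search Console have their own regex matching rules, so treat the pattern as a starting point rather than something to paste in unchanged.

```python
import re
from urllib.parse import urlparse

# Hostnames named in the text; extend the alternation for other assistants.
AI_REFERRER = re.compile(r"(^|\.)(chatgpt\.com|gemini\.google\.com|perplexity\.ai)$")

# Assumed trigger words for conversational queries.
TRIGGER_WORDS = {"how", "why", "best", "vs"}


def is_ai_referral(referrer_url: str) -> bool:
    """True when the referrer's hostname is (a subdomain of) an AI source."""
    host = urlparse(referrer_url).hostname or ""  # None if the URL has no scheme
    return bool(AI_REFERRER.search(host))


def is_long_conversational(query: str, min_words: int = 10) -> bool:
    """True for queries longer than `min_words` words that contain a trigger word."""
    words = [w.strip(".,?!\"'") for w in query.lower().split()]
    return len(words) > min_words and any(w in TRIGGER_WORDS for w in words)


print(is_ai_referral("https://www.perplexity.ai/search?q=faq+schema"))
print(is_long_conversational(
    "how do i add faq schema to a wordpress site without a plugin"
))
```

The `(^|\.)` prefix in the pattern keeps lookalike domains (for example, a host that merely ends in “chatgpt.com”) out of the bucket.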

The Iteration Cycle: Why Your Schema Is Never “Finished”

I’ve been doing this for 20 years, and if there’s one thing I know, it’s that the internet doesn’t stand still. What worked in January 2026 might be outdated by July.

- A/B Test Your FAQ Variations: Try two different versions of a “How-To” block on similar pages. Does a 40-word answer get cited more often than a 60-word one? Data suggests the 40-60 word sweet spot is the winner for most LLMs.

- Monitor LLM Updates: Every time OpenAI or Google drops a new model update, the way they “ingest” data changes. Stay flexible. If you notice a sudden drop in citations, it’s time to revisit your technical structure.

- The 90-Day Freshness Check: AI models love “fresh” facts. Make it a habit to update your FAQ answers every 90 days with new stats, recent dates, or “Updated for 2026” signals.
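
Two of these checks are easy to automate. Here is a minimal Python sketch, assuming your FAQ markup is a standard FAQPage JSON-LD object whose `mainEntity` is a list of questions. The function names are hypothetical, and the 40-60 word range and 90-day window are simply the numbers from this guide, not limits published by any search engine.

```python
import json
from datetime import date

SWEET_SPOT = range(40, 61)  # 40 to 60 words inclusive, per the text


def audit_answer_lengths(jsonld: str) -> list[tuple[str, int, bool]]:
    """Return (question, word_count, within_sweet_spot) for each FAQ entry."""
    data = json.loads(jsonld)
    results = []
    # Assumes mainEntity is a list; a single-object mainEntity is not handled.
    for item in data.get("mainEntity", []):
        answer = item.get("acceptedAnswer", {}).get("text", "")
        count = len(answer.split())
        results.append((item.get("name", ""), count, count in SWEET_SPOT))
    return results


def needs_refresh(last_updated: date, today: date, max_age_days: int = 90) -> bool:
    """True when the answer was last touched more than `max_age_days` ago."""
    return (today - last_updated).days > max_age_days


example = json.dumps({
    "@context": "https://schema.org",
    "@type": "FAQPage",
    "mainEntity": [{
        "@type": "Question",
        "name": "Is FAQ schema still worth the effort?",
        "acceptedAnswer": {"@type": "Answer", "text": "Yes. " * 3},
    }],
})

for question, words, ok in audit_answer_lengths(example):
    print(f"{question}: {words} words, in range: {ok}")
print(needs_refresh(date(2026, 1, 1), date(2026, 7, 1)))
```

Word counts here are a plain whitespace split, which is good enough for flagging answers that are clearly too short or too long.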

Conclusion: Navigating the New Frontier of Search

If you’ve made it this far, you’re already ahead of about 90% of the people still trying to do SEO like it’s 2018. We’ve moved past the era of simple keyword matching and stepped into a world where Generative Engine Optimization (GEO) is the name of the game.

The search engines of 2026 don’t just want to show a link; they want to provide an answer. And while that might feel like a threat to your traffic, it’s actually the single biggest opportunity for growth we’ve seen in a decade.

If you can position your brand as the “source of truth” that the AI trusts, you won’t just be ranking; you’ll be essential.

The Recap: Your AI Visibility Toolkit

If I had to boil down the last 4,000 words into a few key takeaways (and let’s face it, we all love a good summary), here is what matters most for your FAQ schema strategy:

- Structure is the Handshake: FAQ schema isn’t just about pretty rich snippets. It’s the technical language you use to tell LLMs like Gemini and ChatGPT, “Here is the fact you are looking for.”

- The 40-60 Word Sweet Spot: AI models have specific appetites. Keep your answers concise, factual, and direct to maximize your citation rate.

- Freshness is a Ranking Factor: In 2026, old data is dead data. Regularly updating your FAQs with new stats and “Last Updated” timestamps is a massive signal of authority.

- Multi-Layered Context: Don’t just stop at FAQ schema. Combine it with HowTo, Article, and Product schemas to give the machine a 360-degree view of your expertise.
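
To show what that multi-layered context can look like in practice, here is a minimal Python sketch that emits one JSON-LD document with an Article node and an FAQPage node side by side in an `@graph` array. The function name and sample values are hypothetical, and `@graph` is just one common way to combine types; it is not the only valid structure. The `dateModified` property doubles as the “Last Updated” signal mentioned above.

```python
import json


def build_stacked_jsonld(
    headline: str, date_modified: str, faqs: list[tuple[str, str]]
) -> str:
    """Combine an Article node and an FAQPage node in one JSON-LD @graph."""
    graph = [
        {
            "@type": "Article",
            "headline": headline,
            "dateModified": date_modified,  # ISO 8601 date string
        },
        {
            "@type": "FAQPage",
            "mainEntity": [
                {
                    "@type": "Question",
                    "name": question,
                    "acceptedAnswer": {"@type": "Answer", "text": answer},
                }
                for question, answer in faqs
            ],
        },
    ]
    return json.dumps({"@context": "https://schema.org", "@graph": graph}, indent=2)


markup = build_stacked_jsonld(
    "FAQ Schema for AI Overviews",  # placeholder headline
    "2026-01-15",                   # placeholder date
    [("Is FAQ schema still worth the effort?", "Yes, for the reasons covered in this guide.")],
)
print(markup)
```

The output goes inside a `<script type="application/ld+json">` tag on the page, and the visible page content should contain the same questions and answers as the markup.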

The Call to Action: Stop Reading and Start Auditing

You know what? Reading a guide is easy. Implementing it is where the real work happens. If you want to see a lift in your AI citations and organic traffic for Zumeirah (or any brand you’re building), you need to get your hands dirty.

- Audit Your Content: Use tools like Screaming Frog to find your high-traffic pages that are missing schema.

- Research Real Questions: Look at Reddit, Quora, and “People Also Ask” to find the natural language questions your audience is actually asking right now.

- Deploy and Validate: Don’t just push the code and walk away. Use the Rich Results Test to ensure your technical handshake is perfect.

- Monitor the Results: Set up those custom GA4 channels. Track your citations in Perplexity. See where you are winning and where you are invisible.
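
For the audit and validate steps, a quick local pre-check can catch the obvious problems before you reach for the heavier tools. The Python sketch below uses only the standard library and assumes you already have each page’s HTML as a string; the class and function names are made up for illustration. It only tells you whether FAQPage markup is present and parses as JSON, so it complements the Rich Results Test rather than replacing it.

```python
import json
from html.parser import HTMLParser


class JsonLdExtractor(HTMLParser):
    """Collect the raw text of every <script type="application/ld+json"> block."""

    def __init__(self) -> None:
        super().__init__()
        self.blocks: list[str] = []
        self._in_jsonld = False

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_jsonld = True
            self.blocks.append("")

    def handle_data(self, data):
        if self._in_jsonld:
            self.blocks[-1] += data

    def handle_endtag(self, tag):
        if tag == "script":
            self._in_jsonld = False


def _has_faq_type(node) -> bool:
    """True if a JSON-LD node declares FAQPage (as a string or in a list)."""
    if not isinstance(node, dict):
        return False
    types = node.get("@type", [])
    return "FAQPage" in ([types] if isinstance(types, str) else types)


def faq_status(html: str) -> str:
    """Return 'ok', 'missing', or 'syntax_error' for a page's FAQPage markup."""
    parser = JsonLdExtractor()
    parser.feed(html)
    status = "missing"
    for raw in parser.blocks:
        try:
            data = json.loads(raw)
        except json.JSONDecodeError:
            return "syntax_error"
        if isinstance(data, dict):
            graph = data.get("@graph", [data])
            nodes = graph if isinstance(graph, list) else [graph]
        else:
            nodes = data if isinstance(data, list) else []
        if any(_has_faq_type(n) for n in nodes):
            status = "ok"
    return status


page = (
    '<html><head><script type="application/ld+json">'
    '{"@context": "https://schema.org", "@type": "FAQPage", "mainEntity": []}'
    "</script></head><body></body></html>"
)
print(faq_status(page))
```

Loop this over a crawl export and you get a simple three-way split: pages that are fine, pages with no FAQPage markup, and pages whose JSON-LD fails to parse.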

Final Thoughts: Quality is Still the King

You can have the most perfect, error-free JSON-LD code in the world, but if the content inside it is garbage, the AI will eventually figure it out. Schema is the bridge, but quality content and user-centric design are the foundation.

Search engines are getting smarter every day. They are learning to recognize human expertise, genuine experience, and real authority.

So, while we optimize for the machines, we must never forget that we are writing for people. The brands that win in 2026 will be the ones that provide the most value, the most clarity, and the most trust, whether that answer is read by a human on a screen or spoken by an AI assistant.

Honestly, the future of search is here. It’s conversational, it’s structured, and it’s faster than ever. Now, go out there and make sure your brand is the one giving the answers.

Frequently Asked Questions: Mastering the AI-First Web

Honestly, after talking about schema for over 20 years, I know the same few questions always pop up. To make sure you’re walking away with a clear blueprint, I’ve put together the most critical “need-to-know” items. Consider this your quick-reference guide for staying ahead of the 2026 search curve.

Is FAQ schema still worth the effort in 2026?

Absolutely. While Google has pulled back on showing “rich snippets” (those expandable dropdowns) for many sites, the backend value has never been higher. In 2026, FAQ schema is a primary signal for AI Overviews and for Generative Engine Optimization (GEO) more broadly. It helps LLMs parse your facts with high confidence, leading to a significantly higher citation rate.

How long should my FAQ answers be for AI citations?

The “sweet spot” in 2026 is between 40 and 60 words. You know what? LLMs are like Goldilocks: they don’t want answers that are too short (lacking context) or too long (too hard to extract). Aim for a direct, declarative first sentence followed by one or two sentences of specific, fact-dense detail or data.

Can I use FAQ schema for marketing or promotional content?

Don’t do it. Google and modern AI models are incredibly good at sniffing out “sales-y” fluff. If your FAQ looks like an ad, it will be ignored or even penalized. Stick to objective, helpful, and factual answers that provide genuine value to the user. Trust is the currency of AI search; don’t spend it on a sales pitch.

How many FAQs should I include on a single page?

For a deep-dive article (like this one), 5 to 7 high-impact FAQs is the gold standard. If you’re writing a shorter piece, 3 to 5 is plenty. You want to cover the most relevant “intent clusters” without cluttering the page. It’s about quality over quantity: five authoritative answers are better than twelve thin ones.

Does FAQ schema help with voice search and LLMs like ChatGPT?

Yes, and it’s actually one of the best ways to win those spots. Voice assistants and LLMs rely on structured “question-answer” pairs to generate their spoken or text responses. By using JSON-LD markup, you’re making it easy for the “brain” of the AI to find, verify, and read your content aloud to the user.

Should I combine FAQ schema with other types of structured data?

Honestly, this is the “pro move” for 2026. We call it Schema Stacking. By nesting your FAQPage schema with HowTo, Article, or LocalBusiness markup, you’re providing a multi-dimensional map of your content. This layered context helps AI models understand the “who, what, and how” of your brand all at once.