Imagine it’s midway through 2026, and a mid-sized law firm in Dubai wakes up to find their proprietary research being cited completely incorrectly by a major AI search engine.

The reason? A man-in-the-middle attack intercepted their unencrypted data stream, injecting false information into the very “memory” the LLM uses to generate answers.

Honestly, it’s a nightmare scenario that’s becoming far too common. Recent data suggests that over 35% of AI hallucinations in corporate environments are now traced back to compromised data pipelines during the scraping or retrieval phase.

You know what? We’ve spent years obsessing over whether our content is “helpful” enough for humans, but we’ve neglected the very pipes that deliver that content to the machines.

If you want your brand to be a trusted source, you have to realize that SSL Protocols for LLM Citations are no longer just a technical checkbox. They are the digital handshake that tells an AI, “You can trust this information because it hasn’t been touched by anyone else.”

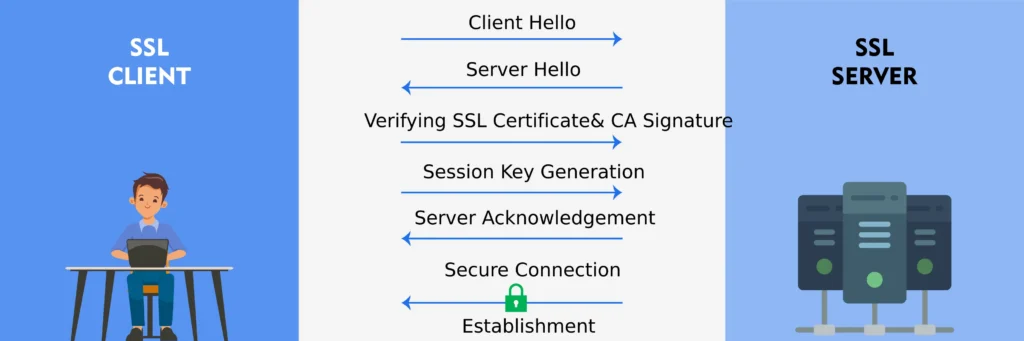

Before we get too far into the weeds, let’s clear up the jargon. When we talk about SSL (which has technically evolved into the more robust TLS), we’re talking about the encryption that keeps your website’s conversation with a visitor private.

LLMs, or Large Language Models, are the brains behind tools like Gemini and SearchGPT. And “LLM Citations”? That’s the holy grail of 2026 SEO. It’s when that AI brain looks at a billion pieces of data and chooses your site as the one to credit in its answer.

An AI is essentially a high-speed researcher. If that researcher finds a library (your website) with a broken lock, they’re going to question the validity of everything inside. Integrating modern SSL protocols specifically for LLM citations isn’t just about security; it’s about proving your accuracy and maintaining compliance in a world where AI-driven disinformation is everywhere.

Without a secure connection, your chances of being cited drop to near zero because the AI models are being trained to prioritize “Verified Secure” sources over everything else.

Honestly, we’ve moved past the point where a simple “green lock” is enough. We’re talking about a fundamental shift in how we verify truth. If you’re still curious about the basics of these models, you might want to check out our breakdown on What Are LLMs? to see how they process information.

But for now, let’s focus on why that secure bridge is the only way to ensure your brand remains a cited authority in the age of AI.

Understanding SSL Protocols and Their Evolution

Honestly, when people talk about SSL, they’re usually using what is actually a legacy name. Secure Sockets Layer (SSL) started back in the 90s as the first real attempt to keep our internet conversations private.

But as hackers got smarter, SSL had to grow up. It eventually evolved into Transport Layer Security (TLS), and by 2026, TLS 1.3 has become the absolute gold standard for the web.

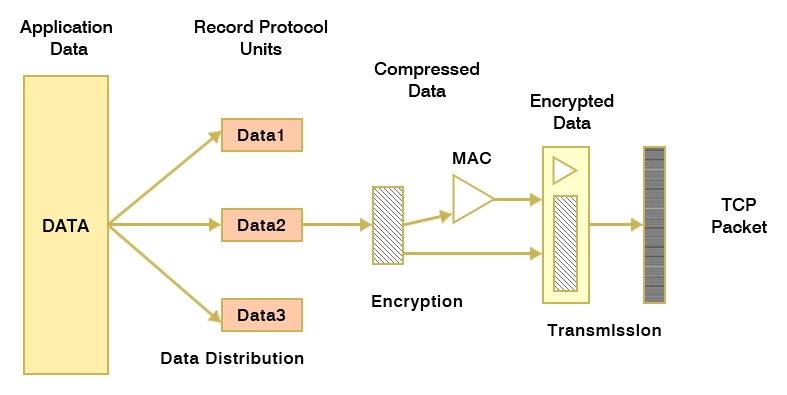

At its heart, an SSL/TLS protocol acts as a digital tunnel. It scrambles the data traveling between your server and whoever is reading it, whether that’s a human or an AI bot, so that no one can “listen in” or change the message mid-flight.

This is exactly how we prevent those pesky man-in-the-middle (MITM) attacks, where a bad actor tries to intercept your traffic to steal sensitive info or, even worse, feed false data to a researcher.

The Evolution: From SSL to TLS 1.3

Older versions of SSL were like rusty padlocks. They worked for a while, but eventually, everyone figured out how to pick them. TLS 1.3 changed the game by removing old, weak encryption methods and making the “handshake” (that initial greeting between a browser and a server) much faster and more secure.

| Feature | Legacy SSL (2.0/3.0) | Modern TLS 1.3 (2026 Standard) |
| --- | --- | --- |
| Security Status | Deprecated & Vulnerable | Industry Standard |
| Handshake Speed | Slow & Multi-step | 1-RTT or 0-RTT (Lightning Fast) |
| Encryption Strength | Weak (RC4, MD5) | Strong (AES-GCM, ChaCha20) |
| Data Integrity | Prone to MITM attacks | High-level Integrity & Forward Secrecy |
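To make the comparison a bit more concrete, here is a minimal Python sketch of a client that refuses anything older than TLS 1.3. It uses only the standard `ssl` module; the function name `make_tls13_client_context` is made up for illustration, and this is one reasonable way to pin the protocol version, not a description of how any particular AI crawler is configured.

```python
import ssl


def make_tls13_client_context() -> ssl.SSLContext:
    """Build a client-side TLS context that only accepts TLS 1.3.

    create_default_context() already turns on certificate and hostname
    verification; we additionally raise the minimum protocol version.
    """
    ctx = ssl.create_default_context()
    ctx.minimum_version = ssl.TLSVersion.TLSv1_3
    return ctx


ctx = make_tls13_client_context()
# Any socket wrapped with this context fails the handshake if the server
# cannot negotiate TLS 1.3 or presents a certificate that does not verify.
```

A client built this way simply cannot talk to a legacy SSL endpoint, which is the practical meaning of “deprecated” in the table above.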

The Rise of LLMs in 2026

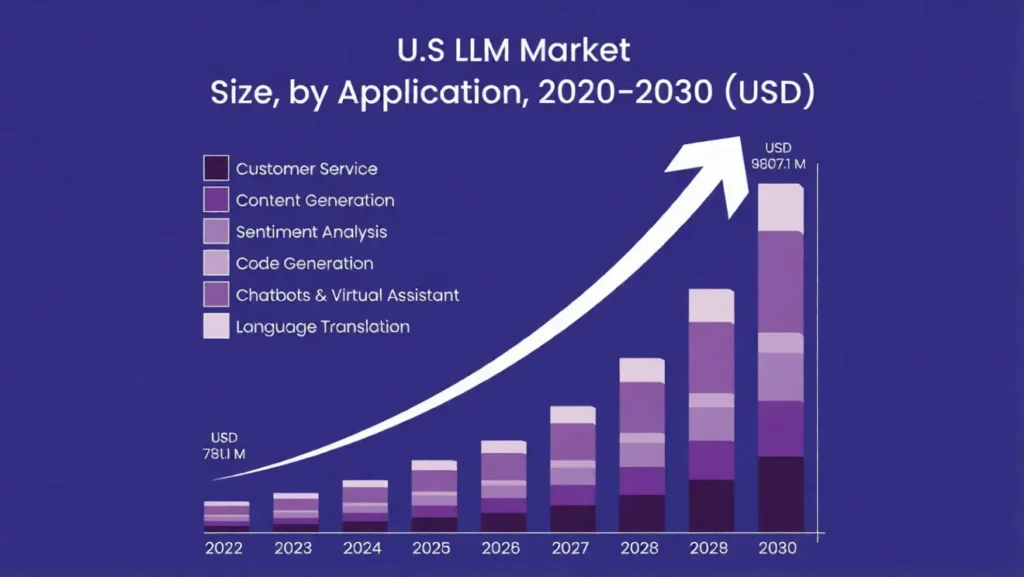

You know what? We aren’t just searching for links anymore. By now, in 2026, Large Language Models (LLMs) like the GPT series and Gemini are handling billions of queries every single day.

We’ve reached a point where nearly 67% of global organizations are using some form of generative AI to handle everything from customer support to complex biomedical research.

These models aren’t just static brains either; they are now deeply integrated with real-time web data. They “crawl” the live web to find the most current answers, which means they are constantly looking for reliable sources to ground their responses.

How LLM Citations Work

This brings us to the most important part of the 2026 search landscape: LLM Citations. When you ask an AI a question, it doesn’t just guess. It uses a process called Retrieval-Augmented Generation (RAG) to fetch live information, verify it against other sources, and then embed a link or reference in its output.

- Fetching: The model “reads” your site to find facts.

- Verifying: It compares your data to other high-authority sites to see if you’re telling the truth.

- Embedding: If you’re trusted, it cites you as the source.

But here’s the catch: without SSL Protocols for LLM Citations, this whole chain is vulnerable.

If an LLM tries to fetch data from an insecure site, it might fall victim to data poisoning or a MITM attack where the facts it “reads” are actually malicious fakes planted by an attacker.

If the AI can’t verify that the data it’s fetching is exactly what you wrote, it simply won’t cite you; it’s too big of a risk for its own credibility.
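As a rough illustration of that fetch-time gate, the sketch below filters a list of candidate URLs down to the ones served over HTTPS before anything is retrieved. The policy and the names `is_citable_source` and `filter_sources` are assumptions made for this example; the actual source-selection rules of commercial AI engines are not public.

```python
from urllib.parse import urlsplit


def is_citable_source(url: str) -> bool:
    """Hypothetical policy: only absolute https:// URLs with a hostname qualify."""
    parts = urlsplit(url)
    return parts.scheme == "https" and bool(parts.hostname)


def filter_sources(urls):
    """Keep only the candidates that pass the HTTPS-only policy, in order."""
    return [u for u in urls if is_citable_source(u)]


candidates = [
    "https://example.com/research/report",
    "http://example.com/research/report",  # plain HTTP: dropped
    "ftp://example.com/file",              # not a web URL: dropped
]
eligible = filter_sources(candidates)
```

A real retrieval pipeline would go on to fetch the eligible URLs with certificate verification enabled; the scheme check is only the first, cheapest filter.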

Honestly, if your security is lagging, you’re basically telling the AI to look somewhere else for its answers.

The Importance of SSL Protocols for LLM Citations

You know what? In the early days of AI, we were all just fascinated that a machine could talk back to us. We didn’t really stop to think about the “piping” that made it possible.

But by 2026, the novelty has worn off, and the stakes have skyrocketed. If the connection between a data source and an AI isn’t ironclad, the whole system collapses into a mess of misinformation and liability.

For anyone serious about maintaining visibility, realizing that SSL Protocols for LLM Citations are the backbone of digital trust is the first step toward surviving the next era of search.

Security Risks in LLM Citations Without SSL

Sending an AI bot to fetch data from an insecure site is like sending a courier to pick up a diamond in a transparent box with no lock. It’s asking for trouble.

Without modern encryption, the citation process is wide open to data interception. An attacker can sit in the middle of that connection and swap out your factual data for whatever they want the AI to “learn.”

Here’s the thing about AI hallucinations: we often blame the model’s “brain” for getting things wrong, but in 2026, many of these errors are actually caused by insecure fetches.

If an LLM pulls data that has been tampered with mid-transit, it will confidently cite that fake information as truth. This doesn’t just hurt the user; it destroys your site’s reputation in the AI’s knowledge graph.

Imagine a hypothetical scenario from late 2025: A major financial news portal in the US neglected their TLS 1.3 updates.

During a high-traffic earnings window, a sophisticated “injection” attack replaced the portal’s reported fiscal figures with slightly altered numbers as an AI aggregator crawled the site.

The result? Thousands of automated trading bots made decisions based on cited AI summaries that were fundamentally wrong, all because the “handshake” wasn’t secure.

Benefits of Implementing SSL Protocols for LLM Citations

Honestly, switching to high-level encryption isn’t just about playing defense. It’s a massive competitive advantage. When you prioritize SSL protocols for AI citation security, you’re signaling to the world, and to the bots, that you’re a professional-grade source of information.

- Enhanced Data Privacy: By 2026, global regulations like GDPR and CCPA have added specific clauses regarding “AI Data Harvesting.” Having a secure protocol ensures you stay on the right side of the law.

- Improved Accuracy: Secure verification means the AI can confirm the data hasn’t been altered. This leads to fewer hallucinations and more accurate citations for your brand.

- Performance Boosts: Modern protocols like TLS 1.3 are built for speed. Faster integrations with APIs and databases mean your content is indexed and cited in near real-time.

- Increased User Trust: When a user clicks a citation link and sees that secure padlock, their trust in both the AI and your brand is reinforced.

SSL vs. No SSL: The AI Citation Breakdown

| Feature | Without Modern SSL/TLS | With SSL Protocols for LLM Citations |
| --- | --- | --- |
| Citation Likelihood | Very Low (Flagged as “High Risk”) | High (Verified Source) |
| Data Integrity | Prone to Interception/Tampering | Guaranteed (End-to-End Encryption) |
| Compliance | High Risk for GDPR/CCPA Violations | Fully Compliant (2026 Standards) |
| Crawl Priority | Deprioritized by AI Engines | Prioritized for Real-Time Updates |

Why It Matters More in 2026

The goalposts are moving. We are entering an era where quantum computing is no longer a sci-fi concept; it’s a looming threat to traditional encryption.

In response, 2026 has seen a massive surge in “Quantum-Resistant” SSL updates. If you aren’t keeping up, your site will eventually be unreadable by the most advanced decentralized LLMs that prioritize peer-to-peer security.

The Rise of the 80% Requirement

You know what? The industry has reached a tipping point. Current projections for 2026 suggest that 80% of enterprises now mandate strict SSL/TLS 1.3 protocols for any tool or data source that interacts with their internal AI systems.

If your agency, like Zumeirah, is pitching to high-level clients, you can’t afford to be in that 20% of laggards. Businesses are no longer just asking “Are you good at SEO?” They’re asking “Is your data pipeline secure enough for our AI to use?”

Predictions from cybersecurity analysts indicate that by the end of this year, search engines will start tagging unencrypted sites with an “Unverified Data” warning in AI summaries.

Imagine how that would kill your CTR. It’s no longer about a tiny rank boost; it’s about whether you’re allowed to exist in the conversation at all.

How to Implement SSL Protocols for LLM Citations

Most business owners treat security like a “set it and forget it” chore. They get the little green padlock, and they assume the job is done.

But when you’re building for the 2026 search landscape, that’s not enough. You aren’t just securing a connection for a human; you’re securing a data pipeline for an AI.

If your “technical handshake” is clunky or outdated, the LLM will simply skip over your site to find a more reliable source.

Implementing SSL Protocols for LLM Citations is about more than just a certificate. It’s about building a secure, high-speed bridge that an AI can trust.

Step-by-Step Guide to Securing LLM Citations

Let me explain the process we use at Zumeirah. It’s not just about installing a file; it’s about a lifecycle of trust.

- Step 1: Choose the Right Protocol (TLS 1.3): Honestly, if you’re still using TLS 1.2, you’re already behind. In 2026, TLS 1.3 is the non-negotiable standard for AI search. It’s faster because it requires fewer “round trips” during the handshake, and it’s significantly more secure because it removes weak, legacy encryption methods.

- Step 2: Integrate with LLM Frameworks: You need to ensure your security settings play nice with AI tools like OpenAI, Hugging Face, or LangChain. If you’re using a proxy like LiteLLM, you’ll need to explicitly configure your `SSL_CERTFILE_PATH` and `SSL_KEYFILE_PATH` to handle these encrypted connections at scale.

- Step 3: Certification & HTTPS Enforcement: Use a trusted Certificate Authority (CA) and automate your renewals with tools like Certbot. Then, enforce HSTS (HTTP Strict Transport Security) to ensure that every single interaction, whether it’s a crawler or a customer, is forced onto a secure path.
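For the HSTS part of Step 3, here is a small sketch of what “enforcing” it can look like at the application layer. It assumes a Python WSGI app; the names `hsts_middleware` and `demo_app` and the one-year `max-age` value are illustrative choices, and in many setups the same header is set in the web server or CDN configuration instead.

```python
HSTS_VALUE = "max-age=31536000; includeSubDomains"  # one year, assumed policy


def hsts_middleware(app, hsts_value=HSTS_VALUE):
    """Wrap a WSGI app so every response carries Strict-Transport-Security."""

    def wrapped(environ, start_response):
        def start_with_hsts(status, headers, exc_info=None):
            # Drop any HSTS header the app set itself, then add ours once.
            headers = [
                (name, value)
                for name, value in headers
                if name.lower() != "strict-transport-security"
            ]
            headers.append(("Strict-Transport-Security", hsts_value))
            return start_response(status, headers, exc_info)

        return app(environ, start_with_hsts)

    return wrapped


def demo_app(environ, start_response):
    """Tiny placeholder app used only to show the wrapper in action."""
    start_response("200 OK", [("Content-Type", "text/plain")])
    return [b"hello"]


secured_app = hsts_middleware(demo_app)
```

Keep in mind that browsers only honor this header when it arrives over HTTPS, so it complements the certificate setup rather than replacing it.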

Common Challenges and Solutions

Making your site ultra-secure can sometimes cause overhead. Encryption takes a tiny bit of processing power, and the TLS handshake can add a few milliseconds of latency. While that doesn’t sound like much, to a high-speed AI crawler, it can be the difference between being cited and being timed out.

- The Latency Problem: High-security handshakes can slow down your site. Solution: Use Connection Pooling and session resumption. This allows the AI to “keep the door open” and reuse the secure connection for multiple requests, slashing the handshake overhead.

- Legacy Compatibility: Sometimes, older tools can’t read the latest protocols. Solution: Take a hybrid approach. Prioritize TLS 1.3, but keep a hardened version of TLS 1.2 active for older “legacy” crawlers that might still be useful for your niche.

- Certificate Management: Fail to renew a cert, and your site disappears from AI citations overnight. Solution: Automate everything. Use ACME protocols to handle renewals so you never have to think about an expiration date again.
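Two of these fixes are easy to sketch in Python with the standard library: reusing one TLS connection for several requests, and checking how many days a certificate has left. The function names are hypothetical, and the first function only saves handshakes if the server actually keeps the connection alive.

```python
import http.client
import ssl


def fetch_paths_on_one_connection(host, paths, timeout=10.0):
    """Fetch several paths from one host over a single HTTPS connection.

    The TLS handshake happens on the first request; later requests reuse
    the connection as long as the server keeps it open.
    """
    ctx = ssl.create_default_context()
    conn = http.client.HTTPSConnection(host, timeout=timeout, context=ctx)
    results = {}
    try:
        for path in paths:
            conn.request("GET", path)
            resp = conn.getresponse()
            body = resp.read()  # drain the response before the next request
            results[path] = (resp.status, len(body))
    finally:
        conn.close()
    return results


def days_until_expiry(not_after: str, now: float) -> float:
    """Days remaining before a certificate's notAfter time.

    not_after uses the format found in SSLSocket.getpeercert()["notAfter"],
    for example "Jan  5 09:34:43 2018 GMT"; now is a Unix timestamp.
    """
    return (ssl.cert_time_to_seconds(not_after) - now) / 86400.0
```

A scheduled job could call `days_until_expiry` with `time.time()` and alert well before the value reaches zero, as a safety net in case ACME automation quietly fails.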

Case Studies and Real-World Applications

Look at the giants. Google and Microsoft aren’t just using SSL because they have to; they’re using it to protect the integrity of their own models.

Google, for instance, has said it already protects its internal traffic with Post-Quantum Cryptography (PQC), knowing that the data they fetch today must be protected from the quantum threats of tomorrow.

As we move deeper into 2026, “future-proofing” is becoming a board-level priority. The leaders aren’t just fixing today’s problems; they’re preparing for the era where traditional 2048-bit RSA encryption could be broken in hours by quantum-capable adversaries.

By starting your transition to quantum-resistant key exchanges now, you’re ensuring that your citations remain valid even when the fundamental rules of the internet shift.

Future Outlook and Strategic Implementation

Honestly, looking at the road ahead, the intersection of security and AI is moving faster than anyone expected. We’re moving away from a time when “security” was just a technical back-end concern and into an era where it’s the very foundation of your brand’s voice.

By 2026, if an AI can’t verify who you are and that your data is safe, you effectively don’t exist in the digital conversation. It’s a tough reality, but for those of us willing to stay ahead of the curve, it’s a massive opportunity to claim authority while others are still catching up.

Emerging Trends in SSL Protocols for LLM Citations by 2026

You know what? The next twelve months are going to bring some wild innovations in how we handle SSL for LLMs. We’re already seeing the birth of “AI-specific encryption.”

This isn’t just about general web traffic; it’s about creating dedicated, hyper-secure tunnels specifically for large-scale data harvesting by authorized AI crawlers.

These protocols are designed to handle the massive throughput required for real-time citations without the lag that usually comes with heavy encryption.

Another massive shift is the use of blockchain for citation verification. Imagine every fact on your site being “signed” with a digital fingerprint that an LLM can check instantly.

By combining SSL Protocols for LLM Citations with decentralized ledgers, we’re creating a web where “fake news” or “data poisoning” becomes almost impossible to pull off. It’s about building a chain of custody for every word you write.
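The “digital fingerprint” idea can be sketched with nothing more than a hash. The example below is a deliberately simplified assumption: a plain SHA-256 digest is not a digital signature and involves no blockchain, so a real scheme would also sign the digest with a private key and publish it somewhere tamper-evident. The function names are made up for illustration.

```python
import hashlib
import hmac


def fingerprint(text: str) -> str:
    """Return a SHA-256 hex digest of the text, used here as its fingerprint."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def matches_fingerprint(text: str, published: str) -> bool:
    """Check fetched text against a previously published fingerprint."""
    return hmac.compare_digest(fingerprint(text), published)


original = "Q3 revenue was 4.2 million USD."
published = fingerprint(original)

# A single changed digit produces a completely different fingerprint.
tampered = "Q3 revenue was 4.9 million USD."
is_original_ok = matches_fingerprint(original, published)
is_tampered_ok = matches_fingerprint(tampered, published)
```

The point is the chain of custody: if the text an AI fetched does not hash to the value the publisher committed to, something changed along the way.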

On the legal side, things are getting serious. We’re expecting new laws that actually mandate secure AI practices. It’s no longer just a suggestion from a tech blog; governments are realizing that insecure AI data pipelines are a national security risk.

If your site is used as a source for an AI that gives dangerous medical or financial advice because your connection was hacked, you could find yourself in a very expensive legal battle.

Strategic Guidelines for SEO and Content Creators

All the technical talk in the world doesn’t matter if it doesn’t help you reach your audience. As a content creator, you need to understand that secure citations are the new “backlinks.” In the past, we begged for links from high-authority sites.

Now, we’re competing to be the trusted source that an AI feels safe quoting. When you have a rock-solid security setup, the AI’s trust in your content increases, which directly boosts your rankings in those highly-coveted AI Overviews.

The Connection Between Security and Trustworthy Content

Let me explain why this works. Google and other search engines are heavily prioritizing “E-E-A-T” (Experience, Expertise, Authoritativeness, and Trustworthiness).

In 2026, you cannot have “Trustworthiness” without a secure technical foundation. If your SSL protocols are outdated, the “Trust” pillar of your SEO strategy crumbles. Secure citations prove to the algorithm that your content is coming from a legitimate, professional source that takes user and machine safety seriously.

Zumeirah’s 2026 Security Compliance Checklist

To help you stay on track, I’ve put together a quick checklist that we use here at Zumeirah to ensure our clients are ready for the AI-first world. Honestly, if you can’t check all of these boxes, you’re leaving your rankings on the table.

- Protocol Audit: Are you running TLS 1.3 with all legacy (SSL 3.0/TLS 1.0) versions disabled?

- HSTS Implementation: Is HTTP Strict Transport Security active to prevent protocol downgrade attacks?

- API Security: Are your connections to AI frameworks (like OpenAI or Gemini) made over encrypted channels using dedicated API keys?

- Monitoring Tools: Are you using real-time audit tools (like Qualys SSL Labs or custom Zumeirah scripts) to monitor for vulnerabilities?

- Certificate Automation: Are your certificates set to auto-renew via ACME to avoid any “dead air” for AI crawlers?

- Transparency Reports: Are you clearly stating your data security measures in your privacy policy to signal compliance to LLM “trust” filters?
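The first item on the checklist is easy to spot-check yourself. The sketch below, using only Python’s standard library, asks a server which TLS version it negotiates and maps the answer to a simple verdict. The verdict labels and function names are assumptions for illustration; a dedicated scanner such as Qualys SSL Labs covers far more than this.

```python
import socket
import ssl


def negotiated_tls_version(host, port=443, timeout=5.0):
    """Connect to host and return the negotiated protocol, e.g. "TLSv1.3"."""
    ctx = ssl.create_default_context()
    with socket.create_connection((host, port), timeout=timeout) as sock:
        with ctx.wrap_socket(sock, server_hostname=host) as tls:
            return tls.version()


def classify_tls_version(version):
    """Map a protocol string to a hypothetical audit verdict."""
    if version == "TLSv1.3":
        return "pass"
    if version == "TLSv1.2":
        return "review"  # acceptable only as a hardened fallback
    return "fail"        # anything older, or no TLS at all


# Example usage (requires network access):
# print(classify_tls_version(negotiated_tls_version("example.com")))
```

Note that this only reports the best version the server offers to a modern client. Confirming that SSL 3.0 and TLS 1.0 are actually disabled means probing with those versions explicitly, which recent Python and OpenSSL builds often refuse to do by default.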

Conclusion: The Secure Bridge to the Future

We aren’t just building websites for humans anymore; we are building data ecosystems for an entire generation of artificial intelligence.

By now, you should realize that SSL Protocols for LLM Citations are the non-negotiable bedrock of this new world. Without them, you’re essentially whispering into a void where no AI will dare to listen, let alone cite you as a trusted authority.

From preventing the nightmare of data poisoning to ensuring your brand stays compliant with 2026’s strict AI regulations, the move to high-level encryption, specifically TLS 1.3, is the single most important technical update you can make this year.

It’s about more than just security; it’s about innovation. It’s about making sure your content is part of the “clean” data pool that LLMs rely on to generate accurate, helpful answers.

The intersection of AI and security is where digital trust is won or lost. If you haven’t audited your protocols yet, there is no better time than right now. Go through the checklist we discussed, automate your certificates, and harden your API connections. Your future rankings and your reputation depend on it.